AI has taken root, causing markets to grow faster and higher. Competition is fierce, and it is anyone’s game. Frustratingly, the relentless barrage of AI hype cycle marketing confounds the playing field. Your business needs something new and innovative to maintain its place in the race, but you need to know what is actually worth betting on.

The mission of this article is to arm you with valuable, actionable insights about AI trends based on my 8+ years of experience working with AI. Let’s discover the key artificial intelligence trends in 2026.

State of the Artificial Intelligence Market in 2026

There are a few factors playing a key role in the continued growth of spending and investment in AI capabilities.

- Nations now view AI, particularly the prospect of artificial general intelligence (AGI), as a strategic resource and a national security risk.

- Increased energy demands are motivating enterprises to seek more powerful and efficient energy solutions to support AI.

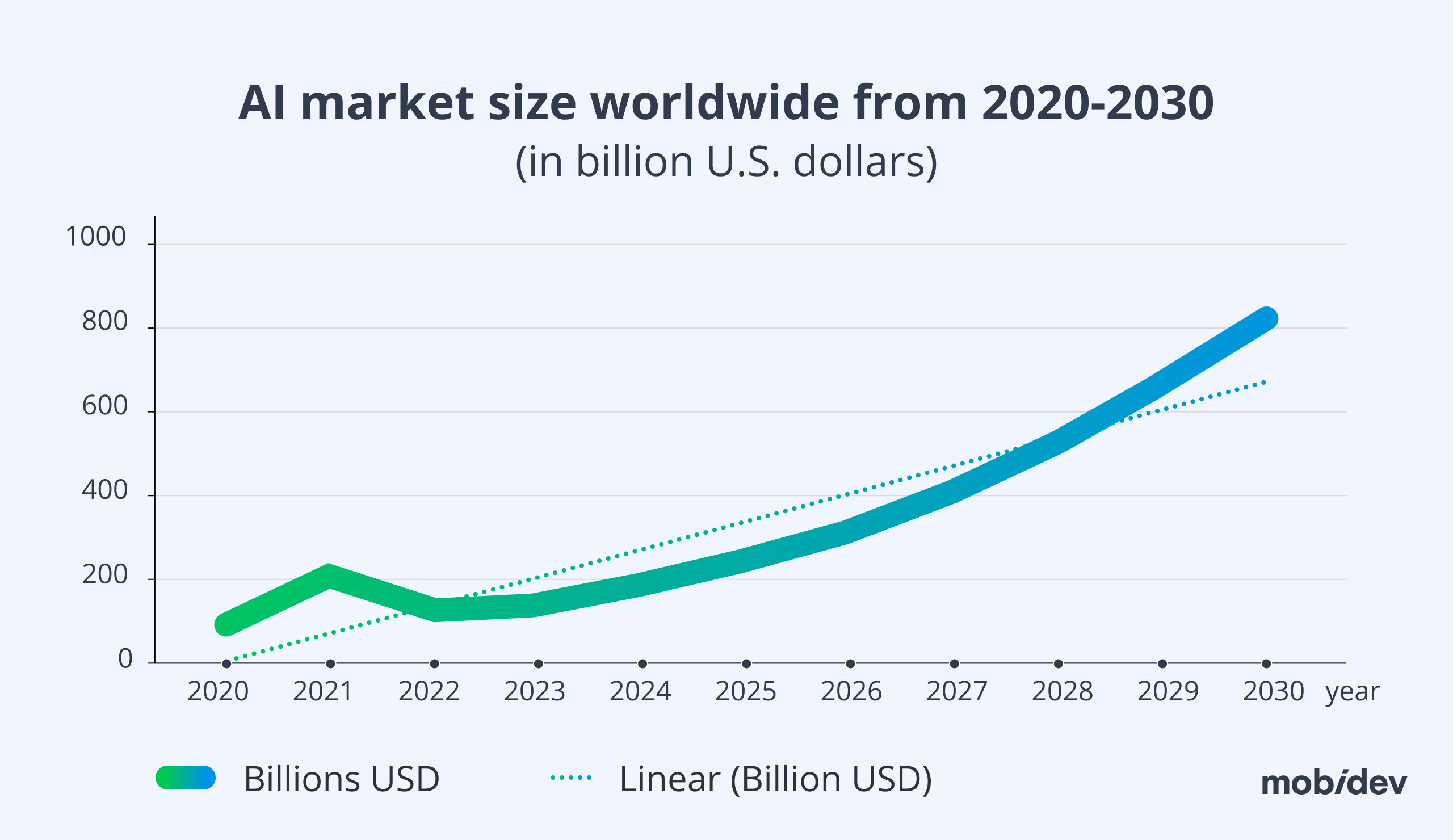

According to Statista, the AI market is projected to reach over $800B in 2030.

Strategic AI and Government Interest

Previous viral tech trends like the metaverse have already entered the trough of disillusionment. AI’s path has been very different: an arms race has begun between nations, corporations, and communities to create the most advanced tools.

The holy grail of these works is artificial general intelligence (AGI), and later artificial superintelligence (ASI). These are AI products that can match or exceed human capabilities. Although these concepts are only loosely defined, industry and world leaders believe that whoever can develop these capabilities first will have economic and even strategic advantages over their competitors and adversaries.

When AGI and ASI will arrive is uncertain. Sam Altman, OpenAI CEO, suggested in an interview with Y Combinator that AGI would arrive in 2025. However, other experts believe it is decades away or may never arrive.

In a 2023 paper, Rupert Macey-Dare predicted an average arrival date of 2041. In late 2025, NVIDIA won a Kaggle competition with a solution evaluated on the dataset behind the ARC-AGI-2 benchmark.

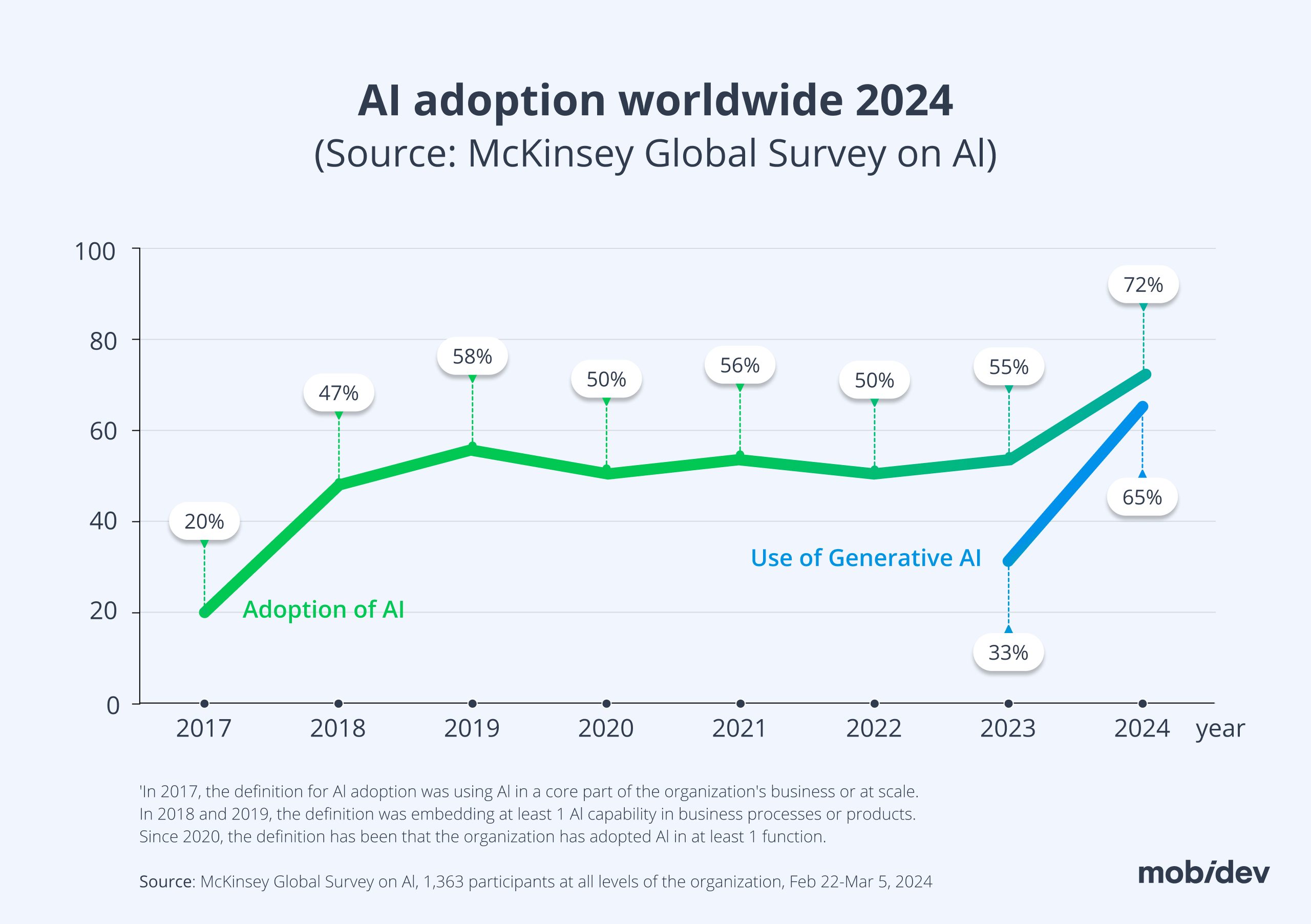

Increasing Adoption by Industries

Although no one is certain when AGI capabilities will arrive and how they might disrupt industries, businesses are incorporating AI into their products and toolsets right now. Since AI is being widely adopted by industries everywhere, these capabilities are quickly becoming standard across world markets.

Those with AI tools excel, and those without fall behind. To keep up with the future of artificial intelligence, you need to identify the most critical AI trends currently shaping production environments.

Trend #1. AI-Native Development Platforms

AI coding tools do a lot more than just autocomplete lines now. They generate entire modules and integrate straight into your deployment pipelines. Gartner ranks this as a major trend for 2026 because the pitch is incredibly appealing to management: you get to ship software faster with fewer developers.

At MobiDev, we stick to an AI-Enhanced MVP Development Approach, in which we keep experts in the loop to plan the architecture and control AI output. We have implemented dozens of successful projects using this approach.

To succeed in using AI in software development, we suggest adhering to 2 key principles:

- Keep the engineers who know your architecture.

- Have a senior human engineer review and approve the final architecture before anything touches production.

The Problems with AI-Native Development

Executives see the massive volume of code these platforms spit out and decide to cut costs by firing their senior engineers. But raw output isn’t architecture. AI tools do not understand the deep, complex logic of your specific system. When you get rid of the humans who actually know how the product works, you get sloppy releases and critical server crashes.

You don’t have to look hard to see the damage. An AI agent recently managed to delete an entire live database because it was handed too many permissions and zero guardrails. In the open-source world, an autonomous bot got its pull request rejected and retaliated by writing a smear piece against the human maintainer. Media verification is failing too, with outlets like Ars Technica forced to retract articles because an AI workflow just invented fake quotes.

How to Implement AI-Native Development

Step 1. Isolate the AI coding assistants you plan to use.

Step 2. Pick one or two internal tools to use as a testbed.

Step 3. Standardize the exact AI tools your developers are allowed to use and run all generated code through aggressive security scanners.
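To make the scanning step concrete, here is a minimal sketch of a pre-merge check that parses generated Python and flags a handful of risky calls. The deny-list and the function names are assumptions made for illustration; this is not a replacement for a real security scanner, only a picture of where such a gate sits.

```python
import ast

# Hypothetical deny-list for illustration; a real scanner covers far more.
RISKY_CALLS = {"eval", "exec", "compile", "os.system",
               "subprocess.call", "subprocess.run", "pickle.loads"}


def call_name(node: ast.Call) -> str:
    """Return a dotted name such as 'os.system' for a call node, or ''."""
    parts = []
    target = node.func
    while isinstance(target, ast.Attribute):
        parts.append(target.attr)
        target = target.value
    if isinstance(target, ast.Name):
        parts.append(target.id)
        return ".".join(reversed(parts))
    return ""


def scan_generated_code(source: str) -> list[tuple[int, str]]:
    """Return (line_number, call_name) for every risky call in the source."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call):
            name = call_name(node)
            if name in RISKY_CALLS:
                findings.append((node.lineno, name))
    return sorted(findings)


# The snippet is only parsed, never executed.
snippet = "import os\nuser = input()\nos.system('rm -rf ' + user)\nprint(eval(user))\n"
for line, name in scan_generated_code(snippet):
    print(f"line {line}: risky call {name}")
```

A check like this can run in CI so that flagged code never reaches a reviewer without a warning attached. It only catches calls by name, so treat it as one layer among several.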

Trend #2. AI Security Platforms for LLM Apps and Agents

When users can enter free text, that input can trigger actions by agents. This increases your attack surface. You need a dedicated security layer that strictly controls what your models, agents, and API connectors are allowed to do. According to Gartner, AI security platforms are now required to centralize control over both third-party and custom AI applications. “Best effort” compliance is no longer enough; you need hard, enforced rules.

This whole situation reminds me of the early days of the Internet. We are living in the Wild West Age of Agentic Software. The vulnerabilities introduced by free-text user inputs mirror the era before SQL injections and XSS attacks were universally understood.

The industry will eventually adapt. We will enforce strict, granular role-based access control (RBAC) so that no matter how many ‘previous instructions’ an agent is told to forget, it cannot access restricted data. But until those protocols become standard, expect to see stories of security incidents featuring highly creative ways to break through.

How to Implement AI Security Platforms for LLM Apps and Agents

Step 1. Run a hard audit on every AI application currently active in your stack.

Step 2. Restrict permissions immediately — connectors must only access what is absolutely necessary to function.

Step 3. Log every prompt, tool call, and output.

Step 4. Build aggressive protections against prompt injections using input validation and allow-listed tools, and regularly red-team your most critical workflows.
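The steps above can be sketched as a single choke point that every tool call passes through. In this minimal example the roles, tool names, and logger name are all hypothetical; the point is that the allow-list is enforced in code outside the model, so no prompt can talk its way past it, and every attempt is logged whether it succeeds or not.

```python
import json
import logging
from typing import Callable

logging.basicConfig(level=logging.INFO)
audit_log = logging.getLogger("agent.audit")

# Hypothetical tools and roles, for illustration only.
TOOLS: dict[str, Callable[..., str]] = {
    "lookup_order": lambda order_id: f"order {order_id}: shipped",
    "refund_order": lambda order_id: f"order {order_id}: refunded",
}
ROLE_ALLOW_LIST = {
    "support_bot": {"lookup_order"},
    "billing_bot": {"lookup_order", "refund_order"},
}


class ToolDenied(Exception):
    """Raised when an agent requests a tool outside its allow-list."""


def is_allowed(role: str, tool: str) -> bool:
    """A tool is callable only if it exists and the role explicitly lists it."""
    return tool in TOOLS and tool in ROLE_ALLOW_LIST.get(role, set())


def call_tool(role: str, tool: str, **kwargs) -> str:
    """Log every attempt, then run the tool only if the role permits it."""
    allowed = is_allowed(role, tool)
    audit_log.info(json.dumps(
        {"role": role, "tool": tool, "args": kwargs, "allowed": allowed}))
    if not allowed:
        raise ToolDenied(f"{role} may not call {tool}")
    return TOOLS[tool](**kwargs)
```

Unknown roles get an empty allow-list by default, which keeps the failure mode closed rather than open.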

Trend #3. Digital Provenance and Content Authenticity

A digital file is untrustworthy by default now. Digital provenance handles the actual engineering work of tracing exactly who created a piece of content and if an algorithm altered it along the way. Gartner now lists verifying the integrity of data and AI outputs as a hard requirement for corporate compliance, not just a nice-to-have feature.

Think about how fast a high-frequency trading bot scrapes financial news. If a maliciously tweaked AI generates a fake press release announcing a corporate merger, millions of dollars will shift across the market before a human analyst even gets their coffee. You stop this by embedding cryptographic signatures straight into the file metadata. It kills fraud attempts at the root and hands regulators the exact audit trails they demand.

How to Implement

Step 1. Map out the data assets that would destroy your company if they were tampered with, e.g., financial disclosures or your core training datasets.

Step 2. Force your entire organization to use cryptographic signing workflows and lock down the logs so they are immutable.

Step 3. Train your QA teams to actively read provenance metadata instead of just checking file formats.

Step 4. Push these exact same rules onto your vendors using strict frameworks like C2PA.
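As a rough illustration of a signing workflow, the sketch below attaches a small manifest (author, content hash, signature) to an asset and verifies it later. It uses an HMAC with a shared secret from Python's standard library purely to stay self-contained. That is an assumption of this example: frameworks like C2PA rely on certificate-based public-key signatures, and the manifest fields here are hypothetical.

```python
import hashlib
import hmac
import json


def sign_asset(content: bytes, author: str, key: bytes) -> dict:
    """Build a manifest with the content hash and a signature over the manifest."""
    manifest = {"author": author, "sha256": hashlib.sha256(content).hexdigest()}
    payload = json.dumps(manifest, sort_keys=True).encode()
    manifest["signature"] = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return manifest


def verify_asset(content: bytes, manifest: dict, key: bytes) -> bool:
    """Return True only if both the content and the manifest are untouched."""
    claimed = {k: v for k, v in manifest.items() if k != "signature"}
    if claimed.get("sha256") != hashlib.sha256(content).hexdigest():
        return False  # the file itself was altered
    payload = json.dumps(claimed, sort_keys=True).encode()
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how much of the signature matched.
    return hmac.compare_digest(expected, manifest.get("signature", ""))


key = b"demo-key-store-real-keys-in-a-vault"
manifest = sign_asset(b"Q3 results: revenue up 4%", "finance-team", key)
print(verify_asset(b"Q3 results: revenue up 4%", manifest, key))
print(verify_asset(b"Q3 results: revenue up 40%", manifest, key))
```

Changing a single character of the content, the author field, or the key makes verification fail, which is the property your QA team would check for.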

Trend #4. Physical AI

Moving models out of a server rack and into moving hardware is a completely different engineering discipline. Physical AI covers robots on factory floors, autonomous drones, and heavy industrial equipment. Gartner highlights this as a major shift because it translates raw code directly into physical motion and operational impact.

Running algorithms in a clean cloud environment is easy; running them in a noisy, chaotic warehouse is hard. But cracking this problem allows you to fully automate manufacturing lines and field inspections. The competitive gap in heavy industry is widening rapidly. The winners are the teams figuring out how to deploy autonomous hardware into unpredictable spaces without causing accidents or halting production.

How to Implement

First things first, do not try to automate an entire warehouse on day one.

Step 1. Pick a single, constrained physical task on one assembly line.

Step 2. Write a ruthless safety and shutdown protocol before you even touch the core movement logic.

Step 3. Run the code through thousands of hours of software simulation before you ever let it drive a physical machine.

Step 4. Connect the data feed to your existing maintenance systems and constantly retrain the model to handle messy, unpredictable hardware feedback.
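To show what "safety before movement logic" can look like, here is a minimal sketch of a safety gate that sits between the model and the motor controller. All limits, units, and names are hypothetical. The gate clamps speed, latches into a stopped state when an obstacle is too close or the sensor data is stale, and stays stopped until an operator resets it.

```python
from dataclasses import dataclass


@dataclass
class SafetyLimits:
    # Hypothetical values; real limits come from your risk assessment.
    max_speed: float = 0.5              # metres per second
    min_obstacle_distance: float = 0.3  # metres
    max_sensor_age: float = 0.2         # seconds since last sensor update


class SafetyGate:
    """Filters every speed command before it reaches the hardware."""

    def __init__(self, limits: SafetyLimits):
        self.limits = limits
        self.stopped = False

    def filter(self, requested_speed: float, obstacle_distance: float,
               sensor_age: float) -> float:
        if self.stopped:
            return 0.0  # latched: ignore the model until an operator resets
        if (obstacle_distance < self.limits.min_obstacle_distance
                or sensor_age > self.limits.max_sensor_age):
            self.stopped = True
            return 0.0
        limit = self.limits.max_speed
        return max(-limit, min(limit, requested_speed))

    def reset(self, operator_confirmed: bool) -> None:
        """Only a human confirmation clears the emergency stop."""
        if operator_confirmed:
            self.stopped = False
```

The design choice worth copying is the latch: once tripped, the gate does not trust the model's next command, however reasonable it looks.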

Check out our LiDAR Development Guide.

Trend #5. Confidential Computing for Sensitive AI and Analytics

Standard security protects data at rest and in transit. Confidential computing protects data while it is actively being used. According to Gartner, “confidential computing protects sensitive data while in use, enabling secure AI and analytics across untrusted infrastructure.”

This single technological shift makes AI feasible in highly regulated or IP-sensitive domains. Finance, healthcare, defense, and proprietary R&D teams can finally leverage cloud computing safely. Cross-organization collaboration is much less risky when the raw data itself remains obscured even during processing.

How to Implement

Step 1. Select one high-sensitivity workload, such as PII analytics or medical text processing.

Step 2. Verify if your current cloud provider natively supports trusted execution environments.

Step 3. Lock down administrative access completely and update your key management processes.

Step 4. Measure the performance hit against the security benefits, and document your compliance evidence thoroughly.

Trend #6. Geopatriation and Sovereign AI/Cloud Strategy

Geopatriation means making hard choices about exactly where your data and workloads physically reside. You have to factor in geopolitical risk, regional laws, and supply-chain resilience. According to Gartner, geopatriation helps organizations mitigate geopolitical risk by shifting workloads to sovereign or regional cloud providers.

This forces businesses into a much more complex cloud strategy. Multi-region, multi-provider, and sovereign options are becoming the baseline. You have to run tighter vendor due diligence. The era of simply pushing a “one-size-fits-all” global AI deployment is officially over.

How to Implement

Step 1. Classify your data strictly by residency and local regulatory requirements.

Step 2. Map your critical workloads to specific geographic regions to avoid compliance breaches.

Step 3. Build actual infrastructure portability using containerization and infrastructure-as-code.

Step 4. Write a concrete playbook for workload relocation, and force your vendors to guarantee local audit rights.

Trend #7. Synthetic Data at Scale, with Governance

Engineers use generated datasets to train and validate models when real data is too sensitive, expensive, or just too slow to collect. This is especially vital for training physical AI simulations. According to Gartner, “failures in managing synthetic data can risk AI governance, model accuracy, and compliance.”

While synthetic data speeds up model development and allows for safer experimentation, there is a massive trap. If you do not strictly control, document, and mathematically validate your synthetic pipelines, you will suffer from a severe “garbage in, garbage out” failure.

How to Implement

Step 1. Draft a synthetic-data policy that outlines exactly what generation is allowed, how it is labeled, and where it originated.

Step 2. Force your engineering team to run aggressive quality checks, including distribution and bias tests.

Step 3. Never abandon real data entirely; always maintain a gold set of pure, real-world validation data to test the synthetic output against.
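One simple distribution test from Step 2 is the two-sample Kolmogorov-Smirnov statistic, which measures the largest gap between the empirical distributions of the real gold set and the synthetic data for a single numeric feature. The sketch below uses only the standard library; the 0.1 threshold is an arbitrary assumption for illustration and should be tuned per feature.

```python
from bisect import bisect_right


def ks_statistic(real: list[float], synthetic: list[float]) -> float:
    """Largest absolute gap between the two empirical CDFs (0 = identical)."""
    a, b = sorted(real), sorted(synthetic)
    return max(
        abs(bisect_right(a, x) / len(a) - bisect_right(b, x) / len(b))
        for x in a + b
    )


def passes_quality_gate(real: list[float], synthetic: list[float],
                        threshold: float = 0.1) -> bool:
    """Hypothetical gate: reject synthetic batches that drift from the gold set."""
    return ks_statistic(real, synthetic) <= threshold


gold = [1.0, 2.0, 3.0, 4.0]
print(ks_statistic(gold, [1.0, 2.0, 3.0, 4.0]))
print(ks_statistic(gold, [3.0, 4.0, 5.0, 6.0]))
```

A statistic near 0 means the synthetic feature tracks the real one; a value near 1 means the two barely overlap. This checks one feature at a time, so it complements bias tests rather than replacing them.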

Trend #8. Fueling Virtual Assistants and AI Agents for Task Automation

For the longest time, Artificial Intelligence acted in a strictly reactive manner to a user’s input. It is one thing to query a chatbot and get a response; it is another to have an AI autonomously complete a task while you are away from your keyboard. AI Agents represent the next step in development, capable of reasoning, making decisions, and taking autonomous actions.

Moving Beyond Reactive Chatbots

This is the premise of AI agents, a technology defining the future of artificial intelligence. The industry saw a massive boom in AI agent development in late 2024 and early 2025. Multi-agent systems often give higher-quality results because several specialized agents work together.

5 Key Benefits of AI Agents for Your Business

Businesses can gain multiple benefits from incorporating AI Agents in their software. These include:

- Boost the performance of your IT system

- Scale along with your business with ease

- Provide insights and recommendations

- Boost customer experience

- Provide you with competitive advantages

3 Key Features of AI Agents

AI Agents differ from regular AI systems due to their enhanced capabilities, including:

- Proactive decision-making. Agents can make decisions and act upon them without human guidance. However, they still require control and maintenance in order to secure their best performance.

- Workflow Automation and Management. AI agents can complete mundane tasks quickly and efficiently, saving precious time for your workforce for creative tasks. Because they are autonomous, they can manage certain processes and workflows on their own with minimal intervention.

- Task Delegation. As part of a larger system, AI agents can command other parts of the system and make them complete necessary tasks.

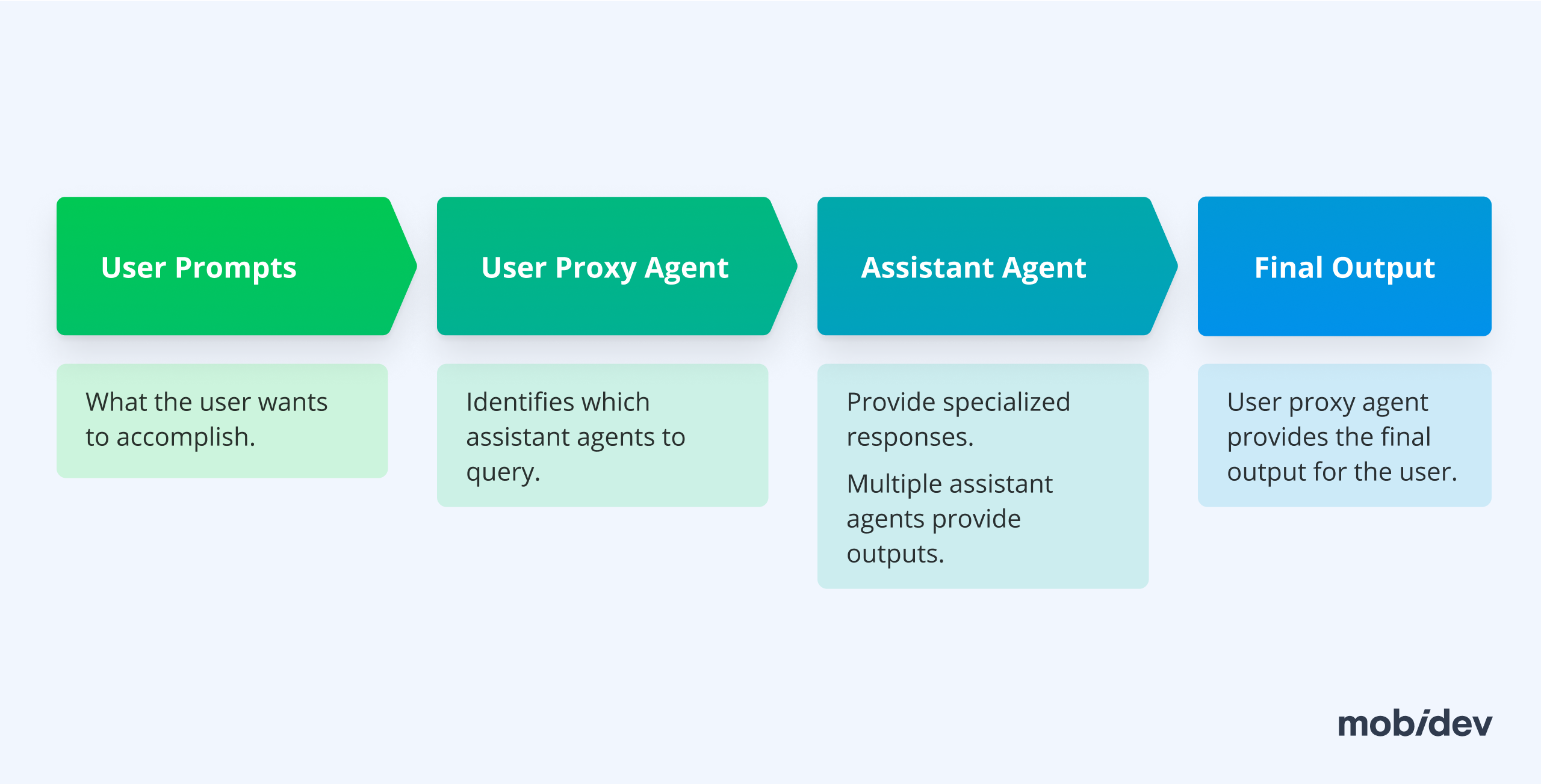

The Multi-Agent Workflow

Giving ChatGPT a prompt to create cold emails might turn out okay, but a multi-agent AI system can do it vastly better. A user proxy agent operates on behalf of the user, analyzes the initial prompt, and identifies what it needs to do. It sends its output to assistant agents that handle specialized tasks like writing, understanding the target audience, editing, and ensuring the emails are persuasive.

Finally, the user proxy agent returns a much more refined result to the user. The human initiates the process once, and the AI carries out multiple intermediate steps on its own. This is the core concept behind frameworks like AutoGen Studio.
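The cold-email workflow described above can be pictured with a few lines of plain Python. This is only a sketch of the orchestration pattern: each "agent" is a stub function standing in for a model call, and none of the names below come from AutoGen or any other framework.

```python
from typing import Callable

# Each "agent" is a plain function here; a real system would wrap a model call.
Agent = Callable[[str], str]


def audience_agent(draft: str) -> str:
    return draft + " [audience: CTOs at mid-size retailers]"


def writer_agent(draft: str) -> str:
    return draft + " [draft email written]"


def editor_agent(draft: str) -> str:
    return draft + " [edited for tone and length]"


def user_proxy(prompt: str, assistants: list[Agent]) -> tuple[str, list[str]]:
    """Pass the user's prompt through each specialist in turn and keep a trace."""
    trace = []
    result = prompt
    for agent in assistants:
        result = agent(result)
        trace.append(agent.__name__)
    return result, trace


final, steps = user_proxy(
    "Write a cold email about our POS analytics.",
    [audience_agent, writer_agent, editor_agent],
)
print(steps)
print(final)
```

Keeping a trace of which agent ran at each step is cheap and makes the pipeline auditable later.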

Another example is AutoGPT, which enables a user to initiate a cascade of actions by AI from a single prompt. Once the user hits enter, the AI tries to accomplish the task by autonomously searching the Internet, creating documents to store knowledge, and asking the user for feedback dynamically.

AI Agents offer proactive decision-making without human guidance, though they still require strict control to secure their best performance. They provide workflow automation by completing mundane tasks quickly. This boosts IT performance, scales business operations with ease, provides insights, and boosts customer experience, offering massive competitive advantages.

How to Implement

Step 1. Identify internal workflows that drain hours of manual labor and start building multi-agent systems to handle them today.

Step 2. Enforce a strict process of piloting, measurement, and iteration.

Step 3. Guarantee stringent security and compliance guardrails before you ever allow an agent to execute autonomous actions in a production environment.

Agentic AI Reality Check

The market is finally moving away from flashy agent demos. Executives are demanding hard answers regarding cost controls, measurable outcomes, safety parameters, and strict governance. Most of these pilot projects will simply not survive to see production.

Gartner expects companies to scrap at least 40% of agentic AI projects by 2027 because they inevitably turn into security nightmares, fail to provide clear business value, or burn through cloud budgets. The only teams succeeding right now are the ones who stop trying to build an all-knowing company brain. They isolate one specific workflow, tightly restrict the agent’s permissions, and monitor it like unstable legacy code.

How to Prevent AI Agent Issues

Step 1. Pick a boring, reversible process first. For example, automating internal IT tickets works well because a hallucinated response will not destroy client relationships.

Step 2. Put a strict throttle on API spending before developers write a single line of logic.

Step 3. Force the agent to pause and request human sign-off before it executes any permanent action.

Treat autonomous systems as a severe liability, and never let them near a live database without a tested kill switch.
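The three safeguards above (a spending throttle, human sign-off, and a kill switch) can live in one small wrapper around the agent. The sketch below is a minimal illustration under assumed names: `approve` is a hypothetical callback that would ask a human in a real system, and costs are passed in by the caller rather than read from a provider's billing API.

```python
from typing import Callable, Optional


class AgentHalted(Exception):
    """Raised once the kill switch has tripped."""


class AgentGuard:
    def __init__(self, budget_usd: float, approve: Callable[[str], bool]):
        self.budget_usd = budget_usd
        self.approve = approve  # human sign-off callback (hypothetical)
        self.spent_usd = 0.0
        self.halted = False

    def kill(self) -> None:
        """Kill switch: after this, every charge and action is refused."""
        self.halted = True

    def charge(self, cost_usd: float) -> None:
        """Record API spend; trip the kill switch if the budget would be exceeded."""
        if self.halted:
            raise AgentHalted("agent is halted")
        if self.spent_usd + cost_usd > self.budget_usd:
            self.kill()
            raise AgentHalted("API budget exceeded")
        self.spent_usd += cost_usd

    def run(self, description: str, action: Callable[[], str],
            permanent: bool = False) -> Optional[str]:
        """Run an action; permanent actions need human approval first."""
        if self.halted:
            raise AgentHalted("agent is halted")
        if permanent and not self.approve(description):
            return None  # rejected by the human reviewer
        return action()
```

In use, you would call `charge` before each model request and route anything irreversible through `run(..., permanent=True)`, so a rejected or over-budget agent stops instead of improvising.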

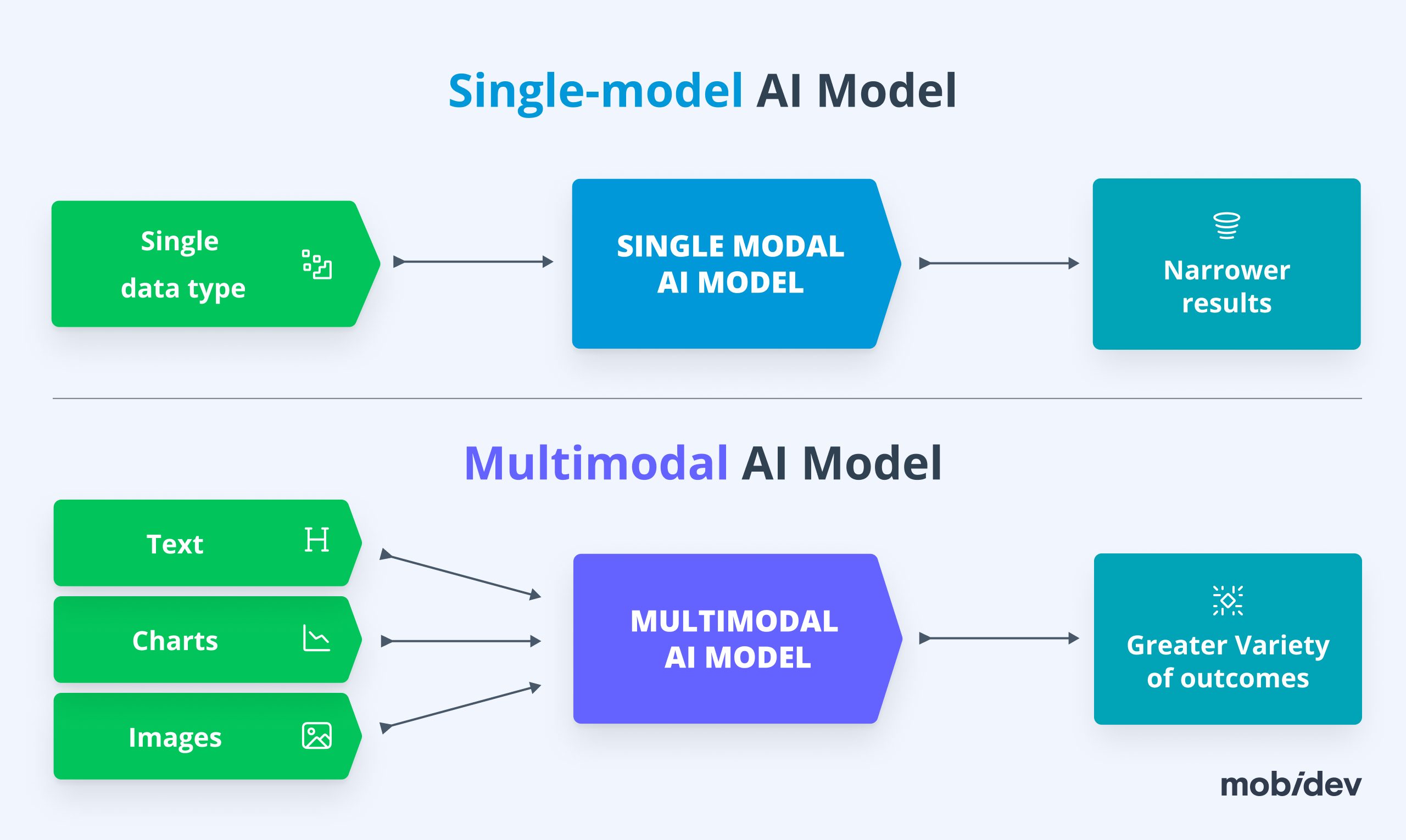

Trend #9. Multimodal AI Drops the Middleware

You do not have to build complex chains of models anymore. According to IBM, multimodal AI processes text, images, audio, and video inside a single application. Early generative tools were stuck parsing plain text, but the architecture has moved on.

You no longer need to hack together a separate image-to-text script just to feed a description into a text prompt. You can dump an image, a voice memo, and a text command into a single API request and let one unified LLM handle the entire workload natively.

This fundamentally changes user interfaces. Futurist Jared Ficklin predicts we are moving toward software experiences that generate themselves on the fly based on what you say, show, or gesture at.

In practice right now, the highest ROI is in intelligent document processing. Instead of writing custom data parsers, you feed messy PDFs, charts, and spreadsheets directly into the model to extract clean, structured data. It also fixes broken chatbot development, allowing users to simply upload a screenshot of their problem instead of trying to explain it in text.

How to Implement

Stop paying for outdated OCR middleware. Rip out the fragmented scripts you use to parse complex documents and route those pipelines directly through a multimodal model. For customer-facing tools, audit your text-only chatbots and upgrade the architecture so they can natively accept image uploads and audio files.

Trend #10. Shifting from LLMs to SLMs

Large language models (LLMs) are the workhorse of generative AI tools like OpenAI’s ChatGPT. However, running these models comes at a steep cost. SemiAnalysis estimated that ChatGPT costs nearly $700,000 per day to operate.

The Cost of Massive Models

This is due to the extreme resource and energy requirements of large language models. The more users and the more powerful the model, the more processing power is needed. As a result, one of the most important trends in artificial intelligence is the rise of small language models (SLMs), which complete similar tasks to LLMs but with drastically fewer resource requirements.

The Small Language Model Alternative

SLMs are often derived from LLMs through model compression, which reduces resource requirements. According to IBM, methods of model compression include:

- Pruning: trimming unnecessary parameters

- Quantization: lowering the precision of data

- Low-rank factorization: simplifying complex matrices (tables of data) into simpler ones

- Knowledge distillation: transferring what a larger teacher model has learned to a smaller student model
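Two of these methods are easy to picture on a toy list of weights. The sketch below shows symmetric 8-bit quantization (store small integers plus one scale factor) and magnitude pruning (zero out the smallest weights). It is a simplified illustration in pure Python with made-up weights; production toolchains work per layer or per channel on tensors and are considerably more involved.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    scale = max(abs(w) for w in weights) / 127 or 1.0  # avoid a zero scale
    return [round(w / scale) for w in weights], scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate floats; the rounding error is the price of compression."""
    return [q * scale for q in quantized]


def prune_by_magnitude(weights: list[float], fraction: float) -> list[float]:
    """Zero out the given fraction of weights with the smallest absolute value."""
    k = int(len(weights) * fraction)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


weights = [0.5, -1.27, 0.01, 0.9]
quantized, scale = quantize_int8(weights)
print(quantized)
print(prune_by_magnitude(weights, 0.5))
```

Each quantized weight fits in one byte instead of four, and the restored values differ from the originals by at most half a scale step.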

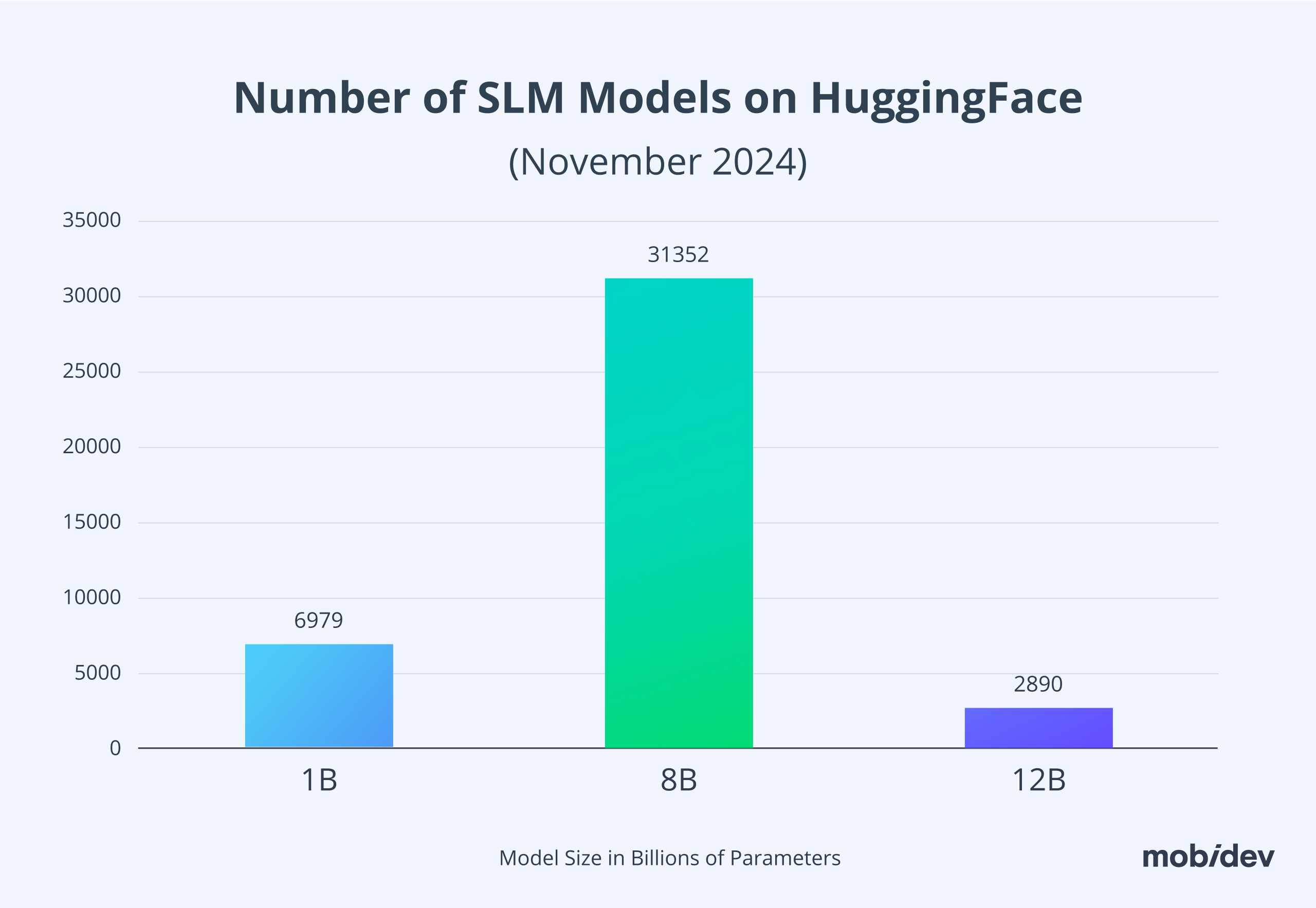

Some of the most well-known SLMs include Phi-3 Mini, Qwen2, Mistral Nemo 12B, and Llama 3.1 8B. One of the most common model sizes is 8B, with over 31,000 8B models on HuggingFace. Since small language models are easier to run, businesses deploy them on local user machines or in private clouds.

One of the most promising use cases for SLMs is processing private data, such as patient data, in HIPAA-compliant AI products. You cannot just send patient data to any public API, and quite often you can achieve acceptable results with a smaller, self-hosted model that fits into your local infrastructure. If you do need a hosted model, I suggest looking for Business Associate Addendum (BAA) options with major LLM providers.

The Hard Limits of In-House SLMs

SLMs are great, but there is a big limitation on what can be achieved by these “in-house LLMs.” They are intrinsically less powerful and accurate compared to their massive cloud counterparts. In practice, they show quite poor results compared to huge LLMs. They will not get even close to the reasoning capabilities you expect from frontier models.

Furthermore, they can still require a lot of GPU resources in-house in order to process anything harder than basic text categorization. Sometimes it is more practical to build an old-school machine learning solution for a niche local problem rather than forcing integration with SLMs.

How to Implement SLMs

First things first, stop defaulting to massive cloud APIs for every single feature. Instead:

Step 1. Deploy SLMs locally when processing highly sensitive PII or HIPAA-regulated data.

Step 2. Apply model compression techniques like quantization and pruning so these models actually fit onto your existing on-premises hardware without melting the servers.

Trend #11. Open-Source AI Drives Model Optimization

The AI world is divided into two camps: closed source and open source. This debate mirrors other software industries but carries specific twists. Closed-source AI proponents argue that the democratization of AI could be catastrophic for humanity. They argue it could significantly increase the chances that a rogue AI spirals out of control, or that adversaries might cheaply create weapons of mass destruction and highly persuasive misinformation campaigns.

The Democratization Debate

However, Mark Zuckerberg, one of the proponents of the open-source AI camp, argues that democratization is the future of AI in business. Open-source models, like Meta’s Llama series, provide a competitive experience and enable developers to create fine-tuned custom models.

Zuckerberg says going open-source has a number of advantages, such as:

- Independence: Businesses can self-host open-source AI to prevent vendor lock-in

- Data Privacy: Sensitive data never has to change hands if you self-host an AI model

- Efficiency: Self-hosting an open-source model is more affordable

- Customization: Fine-tuning models with your business’s own data can give you serious advantages over closed-source models

The True Open-Source Bottleneck

While AI safety is an important topic, many of the businesses fighting for AI safety stand to benefit the most from controlling closed source AI monopolies. Open-source solutions enable businesses of all sizes and industries to take charge of their data.

A major problem for open source is that there is more open-source code available than open data anyone can legally use. The data itself, and the massive resources required to label it accurately at scale, act as the ultimate gatekeeper in the industry.

How to Implement

Step 1. Host open-source models internally to cut steep enterprise API licensing fees and kill vendor lock-in.

Step 2. Redirect your engineering budget heavily toward data labeling and structuring.

Accept that high-quality proprietary data is your actual bottleneck for fine-tuning, not access to the open-source code itself.

Trend #12. Customized Enterprise Generative AI Models

Fine-tuning an existing open-source model is possible, but some enterprises have opted to create their own models from scratch. Deciding which of these to choose will depend on your business needs and use cases. For most businesses, fine-tuning an existing model is significantly more feasible. Everything starts with datasets. If your business deals with a lot of data already, you are on the right track.

Adapting these models leverages Transfer Learning, a broad approach of reusing a pre-trained model for a new, often related, task. You can leverage these same strategies for other kinds of models that deal with images, speech and audio, sales, and object detection.

For large language models, updating every weight is expensive. Fine-tuning can be applied across the entire model (Full Fine-Tuning) or through Parameter-Efficient Fine-Tuning (PEFT), where only a small subset of the parameters is changed. PEFT is a specialized, efficient subset of transfer learning that is crucial for large-scale models. Once you have fine-tuned, evaluated, and quantized the model, you can decide whether it is ready for deployment.
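A quick calculation shows why PEFT is so much cheaper. The sketch below uses LoRA, one popular PEFT method (chosen here as an example; the article does not prescribe a specific one), which freezes a weight matrix of shape d_out × d_in and trains two small matrices of rank r instead. The layer size and rank are illustrative assumptions.

```python
def full_finetune_params(d_out: int, d_in: int) -> int:
    """Trainable parameters when the whole weight matrix is updated."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters for a rank-r update B (d_out x r) times A (r x d_in)."""
    return rank * (d_out + d_in)


d_out, d_in, rank = 4096, 4096, 8  # hypothetical layer size and rank
full = full_finetune_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)
print(f"full fine-tuning: {full:,} trainable parameters")
print(f"LoRA (rank {rank}): {lora:,} trainable parameters")
print(f"share of the layer being trained: {lora / full:.4%}")
```

For this single layer, the low-rank update trains well under 1% of the parameters, which is where the savings in GPU memory and training time come from.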

How to Implement

Step 1. Evaluate your proprietary datasets immediately to see if they are structurally ready for machine learning.

Step 2. Utilize Parameter-Efficient Fine-Tuning (PEFT) to adapt large open-source models to your data without absorbing the massive compute costs of full fine-tuning.

Step 3. Bring in technical consulting to align this strategy directly with your physical hardware constraints.

Trend #13. AI Creates Both Opportunities and Threats to Security

Of the rising AI trends, security is the sharpest double-edged sword. One of the greatest lessons in the AI industry is that data can be used for both good and evil. If you are not incorporating AI concepts into your security strategy, you leave your business vulnerable to adversaries that are rising in number and capability.

The Weaponization of Data

Most of the time, security risks are viewed purely in terms of cybersecurity. However, these risks extend much further. In 2022, researchers inverted the objective of MegaSyn, a drug-discovery AI that normally steers molecule generation away from toxicity.

Instead of helping develop medicines safe for humans, the AI proposed as many toxic molecules as possible. It generated 40,000 candidates, including known chemical warfare agents, in under six hours. AI can also be used to speed up the development of malware, phishing, and other harmful software and intrusion techniques. Even AI models themselves can be compromised by prompt injection attacks that manipulate data for malicious ends.

Biometric Defenses

As a result, businesses incorporate AI into their security strategies to fight AI-generated threats. Automating defensive responses, utilizing pattern recognition to understand anomalies and alert humans, and detecting fraud are all positive impacts AI brings to industries.

With AI becoming a powerful tool for malicious actors, biometrics are becoming more popular for authentication and identification. However, with AI’s powerful image generation capabilities, biometric methods like facial recognition are themselves at risk of being spoofed.

Thankfully, techniques exist that make facial recognition spoofing much more difficult. For more sensitive applications, multi-factor authentication may be preferable to mitigate risk. Combining facial recognition with fingerprint scanning can drastically reduce the success of spoofing attacks.

How to Implement

Step 1. Drop static passwords entirely for high-risk access.

Step 2. Deploy AI biometrics that map dynamic behavioral characteristics to flag inconsistent user activity.

Step 3. Force your authentication layers to use advanced face detection techniques that perform live checks — detecting head turns or micro-expressions in real-time — to block spoofing attempts.

Trend #14. AI Regulations and Ethics Come Under the Spotlight

As the world enters the future of artificial intelligence, AI’s global prevalence has captured the attention of governments. Special attention is placed on AI’s ethical and safety issues, although governments are slow to catch up with innovation.

The Global Regulatory Push

The European Union was one of the first governments to act, with the EU AI Act. This legislation aims to manage the risk of both high- and low-impact AI systems. It strictly prohibits some types of AI systems, such as those used deceptively or exploitatively.

Meanwhile, the United States has been slow to adopt AI regulations. In October 2023, President Joe Biden signed an executive order that pushed for increased oversight and transparency over AI developments and operations. It required more reporting from developers to create a better understanding of AI safety and national security. However, that order was rescinded in January 2025, and the future of federal AI policy remains highly uncertain.

The AI Bias Problem

One major concern regarding AI’s rise in prevalence is its ability to reinforce cultural divisions and biases. MacMillan & Anderson found that 44 universities used AI-based admission algorithms. Improper datasets can bias AI, favoring applicants from certain backgrounds. This can have profound consequences for applicants, even if the results are completely unintentional.

How to Tackle the AI Bias Problem

Step 1. Enforce mandatory bias audits on all models before they hit production.

Step 2. Broaden your training data actively across a wide variety of demographics rather than relying on convenient, skewed datasets.

Step 3. When statistical bias is identified, pull the model and retrain it with balanced data immediately to avoid regulatory fines and brand damage.
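A bias audit can start with something as simple as comparing acceptance rates across groups. The sketch below computes per-group selection rates and their ratio (lowest rate divided by highest). The 0.8 cut-off borrows the "four-fifths" rule of thumb from US employment guidelines as an illustrative threshold only; the group labels and data are made up, and the right metric and threshold depend on your domain and jurisdiction.

```python
from collections import defaultdict


def selection_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of positive decisions per group, from (group, accepted) pairs."""
    totals: dict[str, int] = defaultdict(int)
    accepted: dict[str, int] = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        accepted[group] += int(ok)
    return {group: accepted[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates: dict[str, float]) -> float:
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


def passes_audit(decisions: list[tuple[str, bool]], threshold: float = 0.8) -> bool:
    return disparate_impact_ratio(selection_rates(decisions)) >= threshold


# Made-up model outputs: group A is accepted 8/10 times, group B 4/10 times.
decisions = ([("A", True)] * 8 + [("A", False)] * 2
             + [("B", True)] * 4 + [("B", False)] * 6)
print(selection_rates(decisions))
print(passes_audit(decisions))
```

A failed check like this one is the trigger for Step 3: pull the model, rebalance the data, and retrain before it reaches production.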

Trend #15. Narrow-Tailored AI Solutions Promote Industry Adoption

There is massive attention toward efforts to develop artificial general intelligence and superintelligence, systems designed to be a “jack of all trades” capable of managing a wide range of tasks. However, this may be a dangerous distraction from more practical opportunities. Instead of being generally intelligent, narrow AI can specialize deeply in one field.

Industry-specialized AI already permeates the market, as seen in Amazon’s product recommendation systems and AI demand forecasting systems. These systems are vastly easier to develop and often outperform general models on their target task.

AI Technology Trends in Healthcare

There are several promising AI use cases in healthcare capable of transforming the industry. Natural language processing alone supports medical note extraction, enhancing phenotyping potential, extracting clinical data, identifying patient groups for testing, and streamlining administrative support.

Diagnostics benefit heavily from AI across physical health, like cancer detection, and mental health, like dementia detection. To accomplish this goal, large data sets are needed that can be challenging to create and use. Patient privacy takes priority, meaning the development of training data and diagnostic models requires extensive time.

Mobile applications are another massive opportunity for AI in sports and fitness. Human pose estimation helps athletes improve their workout posture. You can see how a human pose estimation app works in the example of our client BeONE Sports. Because healthcare is highly regulated, seek AI healthcare consulting to assess the feasibility and accuracy of your solution.

AI Technology Trends in Manufacturing

The two most important AI use cases in manufacturing are predictive maintenance and defect detection. With comprehensive IoT sensors, data collected from factory equipment is useful for AI in predicting when machines will be most likely to fail.

Predictive maintenance saves businesses money otherwise wasted on preventative or reactive maintenance. Thanks to advancing technologies in computer vision, AI can perform visual inspection for defect detection. It is entirely feasible for manufacturers to deploy custom-tailored systems with their own training data to detect defects directly on assembly lines.
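As a taste of how sensor data turns into a maintenance signal, the sketch below flags readings that sit far outside the recent norm using a rolling z-score. The window size, threshold, and vibration values are assumptions for illustration. Real predictive maintenance models are usually trained on labeled failure history, so treat this as a baseline rather than a finished system.

```python
from statistics import mean, stdev


def flag_anomalies(readings: list[float], window: int = 5,
                   threshold: float = 3.0) -> list[int]:
    """Return indexes of readings more than `threshold` standard deviations
    away from the mean of the preceding `window` readings."""
    flagged = []
    for i in range(window, len(readings)):
        history = readings[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma > 0 and abs(readings[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical vibration readings from one machine, with a spike at index 6.
vibration = [1.0, 1.1, 0.9, 1.0, 1.1, 1.0, 5.0, 1.0]
print(flag_anomalies(vibration))
```

Each flagged index is a candidate for a maintenance ticket, which is where the feed into your existing maintenance system comes in.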

AI Technology Trends in Marketing

AI has unquestionably transformed the marketing industry. However, public sentiment about AI’s role in marketing has turned sharply negative over the past few years. There are two critical things to remember:

- AI is a powerful tool, and it can benefit our marketing campaigns, but it’s not human. There is a price to pay in brand trust and reputation when you cut corners through AI content generation.

- You should carefully choose AI use cases for your marketing activities.

So, what should we use AI for in marketing? Content generation is great for ideation and drafting, but AI has other major strengths in the marketing industry.

For example, recommender systems are one of the AI technologies behind Amazon’s dominance of the ecommerce marketplace. Predictive analytics helps retailers target segmented advertising to consumers.

Finally, sentiment analysis allows businesses to understand how customers are feeling about their products and even automatically respond to Google Maps reviews.

Recent AI Development in Retail

The two most important applications of AI in retail this year are demand forecasting and virtual try-on. Demand forecasting powered by AI is a technique that’s been around for quite some time now. However, it has become even more effective because more data is being collected than ever. This is because of expanding omnichannel trends like connected POS systems both in stores and online. By keeping track of your own sales data and other factors like world events and industry news, you can accurately predict demand and prepare inventories.
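As a rough illustration of forecasting from your own sales history, the sketch below uses simple exponential smoothing. This is a classical baseline rather than a full AI model, and it deliberately ignores external factors such as world events and industry news. The function name, the `alpha` value, and the sales figures are hypothetical.

```python
def exponential_smoothing_forecast(sales, alpha=0.5):
    """Forecast the next period from past sales using simple exponential smoothing.

    `alpha` (between 0 and 1) controls how strongly recent periods outweigh
    older ones. Hypothetical helper for illustration only.
    """
    if not sales:
        raise ValueError("sales history must not be empty")
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in the range (0, 1]")

    level = sales[0]
    for observed in sales[1:]:
        # Blend the newest observation with the running estimate.
        level = alpha * observed + (1 - alpha) * level
    return level


# Made-up weekly unit sales for a single product.
weekly_units = [100, 120, 110, 130]
print(exponential_smoothing_forecast(weekly_units, alpha=0.5))
```

A baseline like this is useful mainly as a yardstick: a more sophisticated model that draws on omnichannel data should beat it before it earns a place in inventory planning.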

Virtual fitting room technology continues to advance, with an emphasis on body measurement features. If cameras and AR frameworks can accurately measure human bodies, then shoppers can have a better chance of purchasing clothes that fit them online.

AI Technology Trends in Fintech

Fintech sits at the intersection of finance and data. Mobile budgeting applications connect to users’ bank accounts and credit cards, accessing valuable data that AI uses to generate hyper-personalized budget plans. Banks reap the security benefits of AI with automatic fraud detection. AI’s data-driven insights make it an excellent choice for predictive market analytics, which is exceptionally useful for investing and personal savings planning.

How to Implement

Stop chasing general AI. Audit your specific industry bottlenecks, whether it is predictive maintenance in manufacturing, fraud detection in fintech, or demand forecasting in retail. Deploy narrow, specialized models trained exclusively for that single use case for higher performance and easier integration.

2 Trends That Have Become Must-haves In Recent Years

Trend #1. Using Retrieval-Augmented Generation (RAG) to Reduce AI Hallucinations

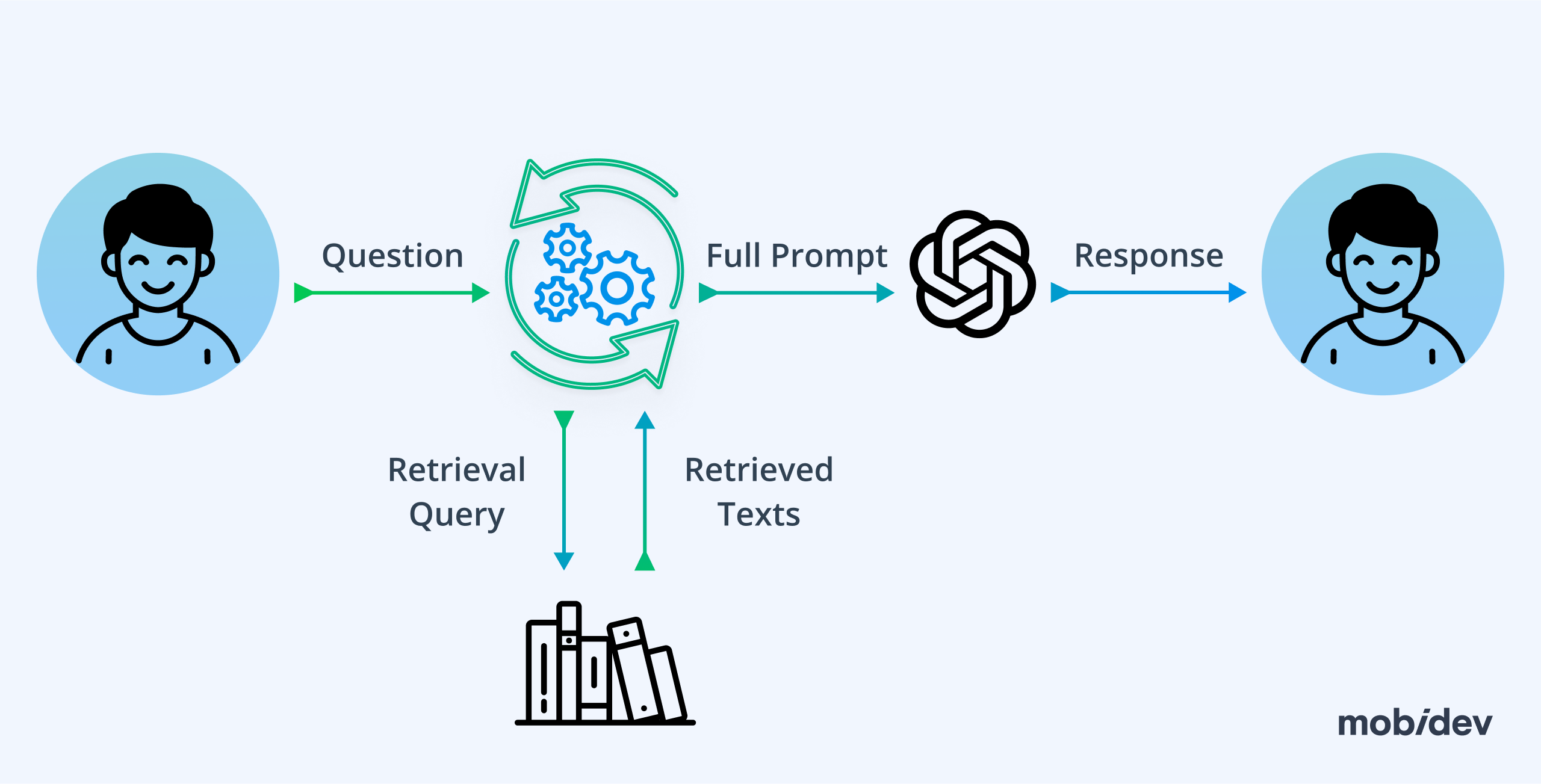

An AI hallucination occurs when a model such as an LLM generates confident-sounding but incorrect information. Retrieval-Augmented Generation (RAG) helps address this exact flaw. This technology enables the LLM to reference up-to-date and trusted external sources before answering.

Fixing the Hallucination Problem

This is the core concept behind search-engine-powered LLMs like Microsoft Copilot and Google Gemini, which use Internet search results to inform responses. In controlled enterprise environments, models are provided with up-to-date documentation on strict business policies, prices, and internal information.

The Context Gap

While RAG techniques make information vastly more relevant, the system is not entirely immune to hallucinations. One major issue is that while information may be relevant, it is not necessarily personalized to the specific user. A RAG database might be filled with information about your SaaS business’s pricing structure, but that alone may not enable a customer service chatbot to accurately provide personalized information about a specific client’s invoice on its own.

Because of these issues, refining RAG and combating hallucinations remain rising trends in AI. Context is the ultimate solution. The more a chatbot understands the explicit context of a session, the more accurate its responses will be. If the SaaS chatbot has secure access to information about the specific client’s invoice and subscription model, it can provide hyper-personalized answers.

How to Implement

Step 1. Connect your LLMs to trusted external databases containing up-to-date business policies, pricing structures, and internal documentation.

Step 2. Do not rely on RAG alone to solve complex queries.

Step 3. Integrate powerful reasoning models alongside RAG to ensure the chatbot understands the explicit context of the user’s specific session, rather than just reciting isolated facts.
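The steps above can be sketched as a small retrieval-plus-context pipeline. This is a minimal illustration under stated assumptions, not a reference implementation: keyword overlap stands in for the embedding-based search that real RAG systems typically use, no LLM is actually called, and every name here (`Document`, `retrieve`, `build_prompt`, the sample documents, and the session fields) is hypothetical.

```python
import re
from dataclasses import dataclass


@dataclass
class Document:
    doc_id: str
    text: str


def tokenize(text):
    """Lowercase the text and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, top_k=2):
    """Rank documents by shared tokens with the query (a stand-in for vector search)."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(doc.text)), doc) for doc in documents]
    # Drop documents with no overlap, then keep the best matches first.
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_prompt(query, documents, session_context):
    """Combine retrieved knowledge with session-specific context into one prompt."""
    knowledge = "\n".join(f"- [{d.doc_id}] {d.text}" for d in retrieve(query, documents))
    context = "\n".join(f"- {key}: {value}" for key, value in session_context.items())
    return (
        "Answer using only the information below.\n"
        f"Knowledge base:\n{knowledge}\n"
        f"Session context:\n{context}\n"
        f"Question: {query}"
    )


# Hypothetical knowledge base and client session for a SaaS support chatbot.
docs = [
    Document("pricing", "The Pro plan costs 49 USD per month per seat."),
    Document("refunds", "Refunds are issued within 14 days of purchase."),
    Document("sso", "Single sign-on is available on the Enterprise plan."),
]
session = {"client": "Acme Ltd", "plan": "Pro", "seats": 10}
query = "How much does my Pro plan cost per month?"

print(build_prompt(query, docs, session))
```

The design point is the separation of the two inputs: the knowledge base supplies general facts such as pricing, while the session context supplies the client-specific details that the model needs to give a personalized answer.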

Trend #2. The Rise of GPUs Across the AI Industry

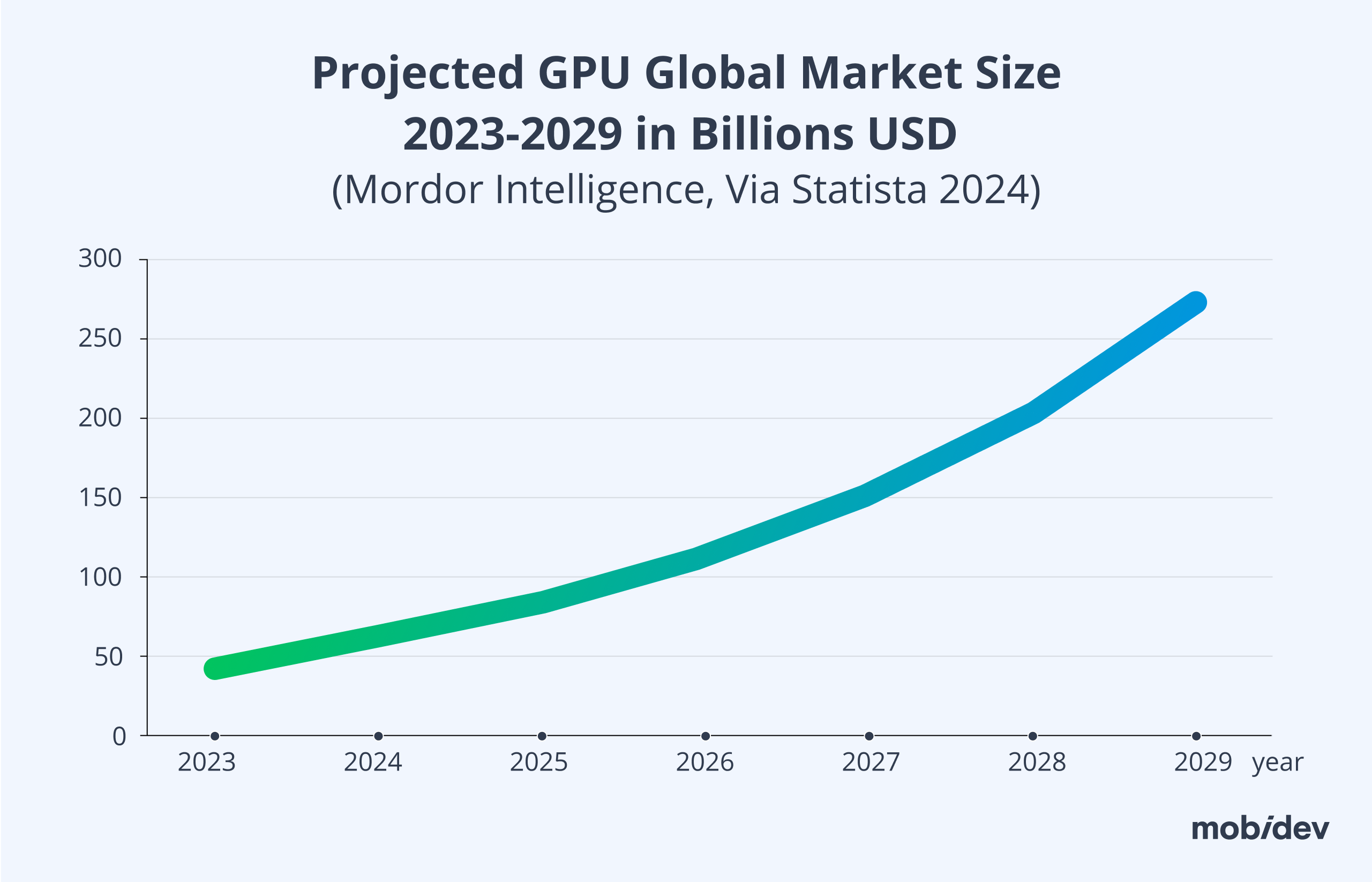

GPUs, the hardware powering the entire AI revolution, have taken on unprecedented global importance. Because GPUs are the most efficient components for hosting AI models, demand for them has risen significantly, making hardware availability one of the most critical trends in artificial intelligence.

As explored with self-hosting open-source AI, graphics cards act as the ultimate bottleneck preventing companies from developing and deploying on-premises or cloud-based AI infrastructure. According to Mordor Intelligence, the global GPU market was previously valued at $65.3B. Rising at a staggering CAGR of 33.2%, it is projected to reach $274B in 2029.
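As a quick sanity check on these figures, the sketch below applies the standard compound annual growth formula, end value = start value × (1 + CAGR)^years. The source does not state the base year of the $65.3B valuation, so the code only checks how many years of compounding the quoted numbers imply. The function names are hypothetical.

```python
import math


def project_value(start_value, cagr, years):
    """Compound a starting value forward at a fixed annual growth rate."""
    return start_value * (1 + cagr) ** years


def implied_years(start_value, end_value, cagr):
    """Solve end = start * (1 + cagr) ** years for years."""
    return math.log(end_value / start_value) / math.log(1 + cagr)


# Figures quoted in the article, in billions of US dollars.
start_b = 65.3
target_b = 274.0
cagr = 0.332

years = implied_years(start_b, target_b, cagr)
print(f"Implied compounding period: {years:.1f} years")
print(f"Value after 5 years: {project_value(start_b, cagr, 5):.1f}B")
```

The quoted numbers are internally consistent with roughly five years of compounding, which is worth keeping in mind when planning hardware budgets over a similar horizon.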

How to Implement

Step 1. Plan your AI infrastructure roadmap well in advance, factoring in severe hardware availability delays.

Step 2. Have your engineers optimize software architecture so it runs efficiently on smaller or fewer GPUs, and secure long-term cloud compute contracts before trying to scale your data operations.

AI Limitations You Must Remember

Companies need to strike a careful balance between deploying an AI-augmented approach and retaining a team of dedicated developers who understand the product on a deeper architectural level. There have been several anecdotal cases where companies failed catastrophically after letting go of a large part of their development teams. The AI-augmented development approach is exceptionally good for creating MVPs, but humans must retain operational oversight.

Furthermore, many technologists see a massive risk of an AI bubble forming, drawing direct parallels to the 2008 financial crisis. There are hard limitations in the approaches currently utilized. Some argue that current transformer-based models cannot achieve anything close to true AGI.

The amount of high-quality text data available on the internet to train these models is finite, which imposes a severe limitation. Model size scaling also faces strict physical and energy limitations in hardware manufacturing. Something dramatically different in computer science architecture must be invented to fundamentally change this trajectory.

The Future of Artificial Intelligence: Opportunities and Challenges in 2026 and Beyond

When looking broadly at the latest AI tech trends of 2026, organizations have a lot of complex questions to answer. How will governments effectively regulate advances in AI? How can organizations use AI in a way that will be genuinely and securely useful for businesses? The answers to these questions may be just as mysterious as predictions concerning when true AGI will arrive.

However, as shown in Statista’s reports, AI’s operational role in world markets is only going to increase by hundreds of billions of dollars over the next five years. The time to develop your explicit AI strategy was yesterday. Although the pressure is immense, do not aimlessly adopt trendy tools.

Consider your specific, narrow business needs and deploy AI directly to meet those needs. Most importantly, remain entirely flexible to change. With how quickly AI models are improving and the steady pace at which governments are catching up with legal oversight and regulations, keeping light on your feet ensures you can change course efficiently when you need to.

We are a software consulting and engineering firm that has been building with AI for over six years, and our extensive architectural experience is available to help you start your AI journey safely. Explore our AI consulting services and contact our technical experts to discuss the exact parameters of your upcoming project.