
The virtual fitting experience is gradually becoming the new norm for those who buy online. Brands such as Amazon, Alibaba, Kering, and Nike invest in virtual fitting rooms to increase conversion and reduce return rates. Although ready-made solutions are readily accessible, many retailers prefer to invest in custom development to craft immersive experiences that are specifically tailored to their brand and product lines.

In this article, we’ll explore how virtual fitting room technology works, the different approaches to developing virtual fitting room solutions, and the best practices for successful implementation.

What is Virtual Fitting Room Technology, and How Does it Work?

According to studies, 71% of consumers are more likely to return products purchased online. Virtual fitting room technology helps counteract high ecommerce return rates by letting customers try on clothing digitally, without a physical fitting room.

By overlaying an item on a live video feed of the shopper, the application lets the customer check size and fit before buying. This works with a smartphone, tablet, or PC webcam.

There are three basic components of any virtual fitting room application, aside from the UI:

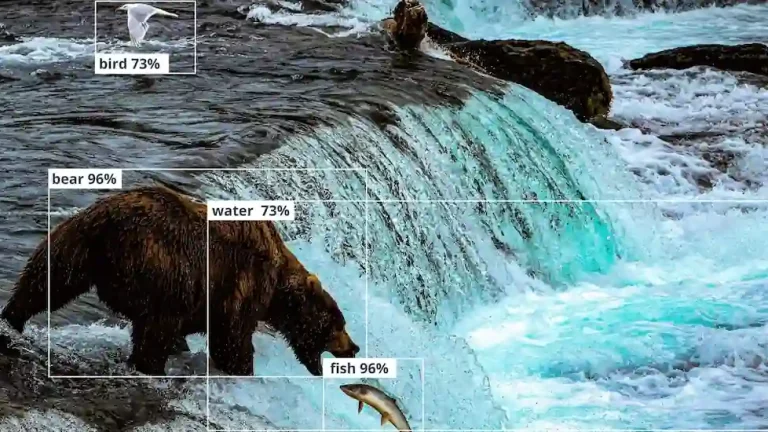

- Computer vision: software must be able to see and understand the scene, whether it’s a face or some other part of the shopper’s body.

- Augmented reality: virtual objects need to be rendered on top of the scene and tracked onto the appropriate part of the shopper’s body.

- Artificial intelligence: AI can improve the efficiency of scene understanding, object recognition, and visual quality for AR applications.

Limitations of AR Libraries

Every AR project has limitations that need to be considered. While working with ARKit, our team ran into rendering limitations, because the framework prioritizes inference processes over tracking accuracy. This is a serious challenge for apps that depend on real-time performance, as they may not reach the level of performance they need.

Another challenge we faced was the limited ability of ARKit’s algorithms to accurately identify specific body parts. The primary purpose of these algorithms is to recognize and track the entire human body. However, when processing images that only capture a portion of the body (e.g., just a hand or foot), they struggle to detect key points or landmarks.

Types of Virtual Dressing Room Technology Solutions

There are a few different approaches to virtual fitting room applications. Let’s explore a few popular methods.

Online Virtual Fitting Room Applications

This is what most people have come to expect from a virtual fitting room solution. Using a mobile device’s camera, you can display 3D products overlaid on top of the image and tracked to the shopper’s body. This helps the customer visualize how the product would look on their body.

This technology can also serve the same objective in another way: by creating digital human models. Facial recognition and body scanning technologies typically used in augmented reality can build an accurate model of a shopper’s body. The user’s smartphone acts as the sensor that supplies the data for the software to generate the model. The user can then adjust the model manually in the app to better match their body, and see apparel on it with the correct sizing information.

One advantage of this strategy is that clothing doesn’t need to be rendered on the real user; instead, the try-on experience occurs on a predefined model displayed on your screen. However, you still need a reliable method for scanning the buyer’s body. This can be challenging if multiple images are needed, and it often requires manual adjustments.

Wardrobe Assistants and Outfit Planners

Another, relatively simple use of virtual dressing room technology works with the clothes users already own. First, pictures of the user’s wardrobe are taken and automatically classified. Then, the application organizes the wardrobe using a variety of filters, which might include seasons, occasions, colors, and brands. Recommendations based on these filters can play a big role in AI-generated outfit plans.

More importantly, these recommendation engines can be integrated into omnichannel retail experiences. Once you know what clothes a shopper has in their wardrobe, you can offer tailored suggestions for what might go well with clothes they already own. You can also combine the outfit planning method with online virtual fitting room applications, showing the customer how their outfit could benefit from your recommendations.
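The filtering step can be illustrated with a minimal sketch. It assumes the classification step has already tagged each item; `WardrobeItem`, `filter_wardrobe`, `plan_outfit`, and the sample data are hypothetical names and values, and the "first match per category" rule is only a stand-in for a real recommendation engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WardrobeItem:
    name: str
    category: str         # e.g. "top", "bottom", "shoes"
    color: str
    seasons: frozenset    # seasons the item suits
    occasions: frozenset  # occasions the item suits
    brand: str


def filter_wardrobe(items, season=None, occasion=None, color=None, brand=None):
    """Return the items that match every filter that was provided."""
    result = []
    for item in items:
        if season is not None and season not in item.seasons:
            continue
        if occasion is not None and occasion not in item.occasions:
            continue
        if color is not None and item.color != color:
            continue
        if brand is not None and item.brand != brand:
            continue
        result.append(item)
    return result


def plan_outfit(items, season, occasion):
    """Pick the first matching item per category (placeholder for a recommender)."""
    outfit = {}
    for item in filter_wardrobe(items, season=season, occasion=occasion):
        outfit.setdefault(item.category, item)
    return outfit


# Hypothetical wardrobe, as it might look after automatic classification.
WARDROBE = [
    WardrobeItem("linen shirt", "top", "white",
                 frozenset({"spring", "summer"}), frozenset({"casual", "office"}), "BrandA"),
    WardrobeItem("wool sweater", "top", "grey",
                 frozenset({"winter"}), frozenset({"casual"}), "BrandB"),
    WardrobeItem("chinos", "bottom", "beige",
                 frozenset({"spring", "summer", "autumn"}), frozenset({"casual", "office"}), "BrandA"),
    WardrobeItem("sneakers", "shoes", "white",
                 frozenset({"spring", "summer", "autumn"}), frozenset({"casual"}), "BrandC"),
]

summer_outfit = plan_outfit(WARDROBE, season="summer", occasion="casual")
```

A retailer’s catalog could be run through the same filters to suggest items for categories the outfit is missing.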

In-Store Virtual Dressing Rooms

With smart mirror displays, you can provide an in-store experience similar to, or even more advanced than, the one customers have at home with their smartphones. It’s no secret that fitting rooms contribute to inventory shrinkage. Depending on staff availability, they can also be difficult to maintain during all operating hours. Additionally, not every item in stock at a store will be available in a customer’s size. For all these reasons, smart mirrors give customers more chances to try on items they might not otherwise be able to, while reducing the resource demands on the store.

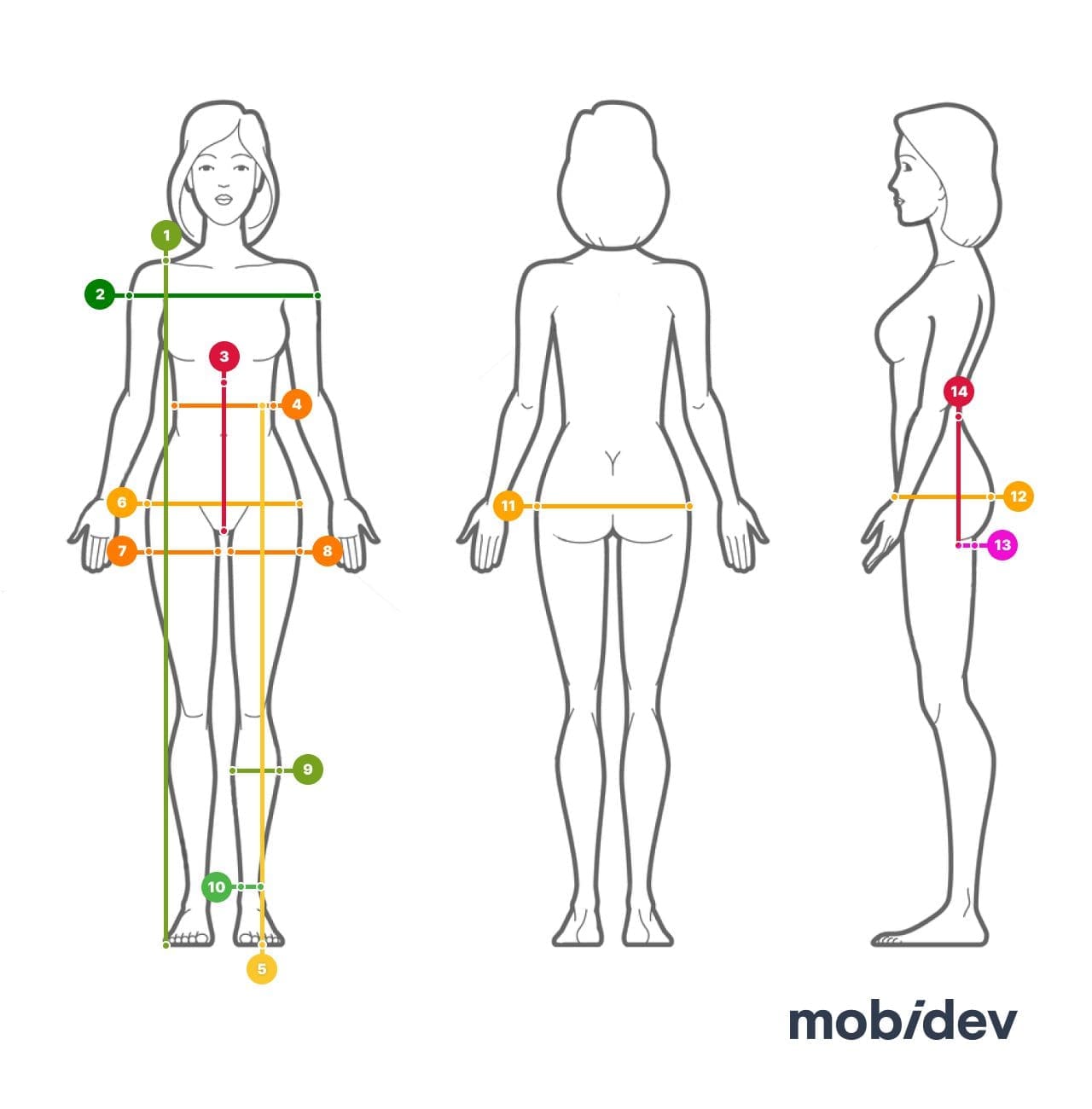

Body Sizing Solutions

To address the frustration of finding the perfect fit, modern clothing brands have adopted digital solutions that use AR frameworks and AI to provide personalized size recommendations. These tools analyze each customer’s unique body shape and preferences by capturing precise measurements through body scanning technologies. Their primary goal is to simplify the sizing process and give customers an efficient way to find the right fit for their bodies.

For instance, AI-based size recommendation solutions often use customer-submitted images or real-time scanning through smartphone cameras to calculate the customer’s measurements. This process involves analyzing body shapes using segmentation and key point detection to achieve higher accuracy.
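Once measurements are available, mapping them to a size can be as simple as a lookup. The sketch below assumes chest and waist measurements in centimeters; the `SIZE_CHART` values and the `recommend_size` function are hypothetical, and a real chart would come from the brand’s own sizing data.

```python
# Hypothetical size chart: (label, max chest in cm, max waist in cm).
SIZE_CHART = [
    ("S", 92, 78),
    ("M", 100, 86),
    ("L", 108, 94),
    ("XL", 116, 102),
]


def recommend_size(chest_cm, waist_cm, chart=SIZE_CHART):
    """Return the smallest size whose limits cover both measurements, or None."""
    for label, max_chest, max_waist in chart:
        if chest_cm <= max_chest and waist_cm <= max_waist:
            return label
    return None  # outside the chart: fall back to manual sizing advice
```

Choosing the smallest size that covers every measurement is one simple policy; a brand might instead weight measurements by garment type or by the customer’s fit preference.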

Custom vs Ready-Made Virtual Fitting Room Apps

At first glance, a ready-made app may seem to be the fastest route to market for a virtual fitting room application. However, these apps typically involve digital mannequins rather than full-fledged AR experiences. Ready-made solutions that offer AR features are few and far between, and their quality leaves a lot to be desired, which can result in poor customer experiences.

The other option is custom development that integrates deeply with your brand and product line, tailoring experiences to specific use cases that your customers will value. This also helps differentiate your brand in the market. Such an investment can be much more profitable in the long run, even though such projects are complex and require special expertise.

Here are the key benefits of a custom virtual fitting room platform:

- Tailored Experience: Custom virtual fitting room experiences can be designed to meet the unique requirements of a brand or product line, ensuring a better fit for the target audience. Adopting a custom solution also allows for greater alignment with brand aesthetics and messaging, enhancing overall user experiences.

- Flexibility and Scalability: Unlike ready-made solutions, a custom virtual try-on application can evolve with your business needs, adding features and integrations as needed. This can help with scalability as well; as your user base grows, custom solutions can better adapt to increased demand without compromising performance.

- Data Control: You can get far more control over your data with a custom solution than you can with a ready-made application. This helps you ensure compliance with privacy regulations and enables tailored marketing strategies.

- Competitive Advantage: Virtual fitting room applications that are a custom fit for your brand can provide features that competitors don’t have, setting your brand apart. This also leaves room for you to experiment with novel features and technologies that can keep you ahead of the curve.

How to Build a Custom Virtual Fitting Room Platform

Creating a virtual fitting room app involves several steps, from planning and design to development and testing. Keep in mind that the workflow may change depending on the type of product you choose and the scope of features you want in your app. Here we focus on some technical aspects of the development process.

There are several approaches that you can use to build a custom virtual fitting room platform. How you choose to develop your application depends on your objectives, vision, and product line, so no one method is best for everyone.

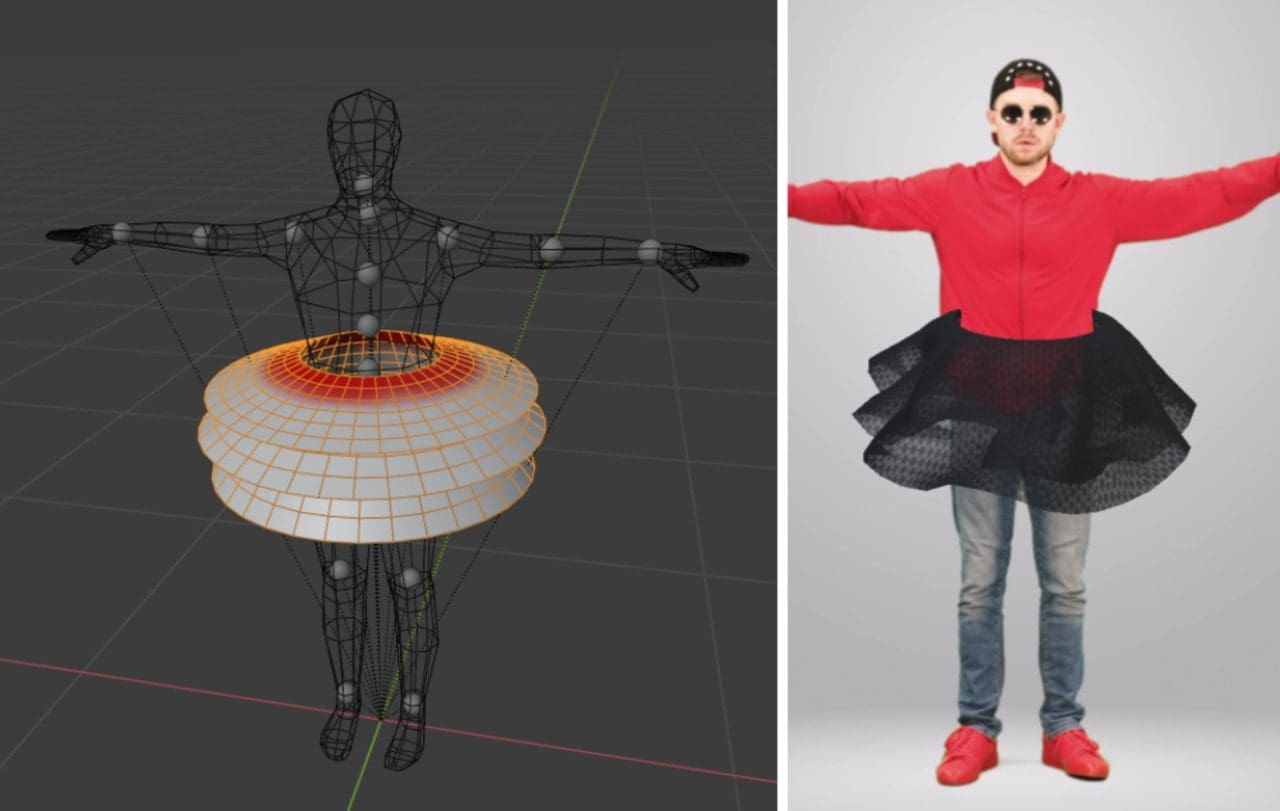

Approach #1: 3D Models of Clothes and Body

In this approach, we’ll digitize both the clothes and the body. Here’s how it will work:

- We’ll create a 3D mesh for the user from a video.

- From a preset of 3D reconstructions, we’ll choose the necessary clothes.

- The clothes are scaled and placed on the defined landmarks on the 3D wireframe of the user.

Working with cloth mesh (Source)
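The scaling and placement step can be sketched as follows, assuming the garment mesh and the body mesh each expose a left and a right shoulder landmark. The function name `fit_garment` and the landmark choice are assumptions for illustration. The sketch applies only a uniform scale and a translation; a production pipeline would also handle rotation and per-region deformation.

```python
import math


def fit_garment(vertices, garment_left, garment_right, body_left, body_right):
    """Scale and translate garment vertices so that the garment's shoulder
    anchors line up with the body's shoulder landmarks (no rotation)."""
    # Uniform scale: ratio of body shoulder span to garment shoulder span.
    scale = math.dist(body_left, body_right) / math.dist(garment_left, garment_right)
    # Midpoints between the shoulders serve as the alignment origin.
    garment_mid = [(a + b) / 2 for a, b in zip(garment_left, garment_right)]
    body_mid = [(a + b) / 2 for a, b in zip(body_left, body_right)]
    return [
        tuple(bm + scale * (v - gm) for v, gm, bm in zip(vertex, garment_mid, body_mid))
        for vertex in vertices
    ]


# Garment modeled around the origin; body landmarks in centimeters.
fitted = fit_garment(
    vertices=[(1, 0, 0), (0, -2, 0)],
    garment_left=(-1, 0, 0), garment_right=(1, 0, 0),
    body_left=(-20, 140, 0), body_right=(20, 140, 0),
)
```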

Approach #2: Latent Diffusions and Their Variations

Another approach for virtual fitting room development utilizes the latest diffusion models and their variations. Here’s how it works:

We’ll use a Stable Diffusion inpainting pipeline tailored for custom clothes. To set up the model, over fifty pictures of clothes from different angles need to be gathered, along with their masks and text descriptions. Only the clothing images have to be collected, as the masks and descriptions are produced by preprocessing during development. We’ll link each clothing piece to its text description. The input interface includes:

- A picture upon which we will change clothes

- The mask where the change is happening

- A description to match the clothes we want to add
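Here is a minimal sketch of how those three inputs might be passed to an inpainting pipeline. It assumes the Hugging Face `diffusers` library and Pillow are installed. `TryOnRequest`, `validate_request`, `run_try_on`, and `checkpoint_path` are hypothetical names; the checkpoint stands for whatever fine-tuned inpainting model the project produces, and the 512x512 resolution is an assumption. This is not necessarily the setup used in the project described here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TryOnRequest:
    person_image_path: str  # picture upon which the clothes are changed
    mask_image_path: str    # white pixels mark the region to repaint
    description: str        # text matching the garment to add


def validate_request(request):
    """Reject requests with a missing path or an empty description."""
    if not request.person_image_path or not request.mask_image_path:
        raise ValueError("both an image path and a mask path are required")
    if not request.description.strip():
        raise ValueError("a garment description is required")
    return request


def run_try_on(request, checkpoint_path, size=(512, 512)):
    """Repaint the masked region so that it matches the description."""
    # Imported lazily so the rest of the module works without these packages.
    from diffusers import StableDiffusionInpaintPipeline
    from PIL import Image

    validate_request(request)
    pipe = StableDiffusionInpaintPipeline.from_pretrained(checkpoint_path)
    image = Image.open(request.person_image_path).convert("RGB").resize(size)
    mask = Image.open(request.mask_image_path).convert("L").resize(size)
    result = pipe(prompt=request.description, image=image, mask_image=mask)
    return result.images[0]  # a PIL image with the new garment painted in
```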

Approach #3: Pose Estimation and Body Segmentation Models

For virtual fitting rooms with body measurement features, we need pose estimation models to detect body key points and image segmentation models (more precisely, body segmentation) to create pixel masks of the human body. This pixel-level information is then converted to centimeters or meters.

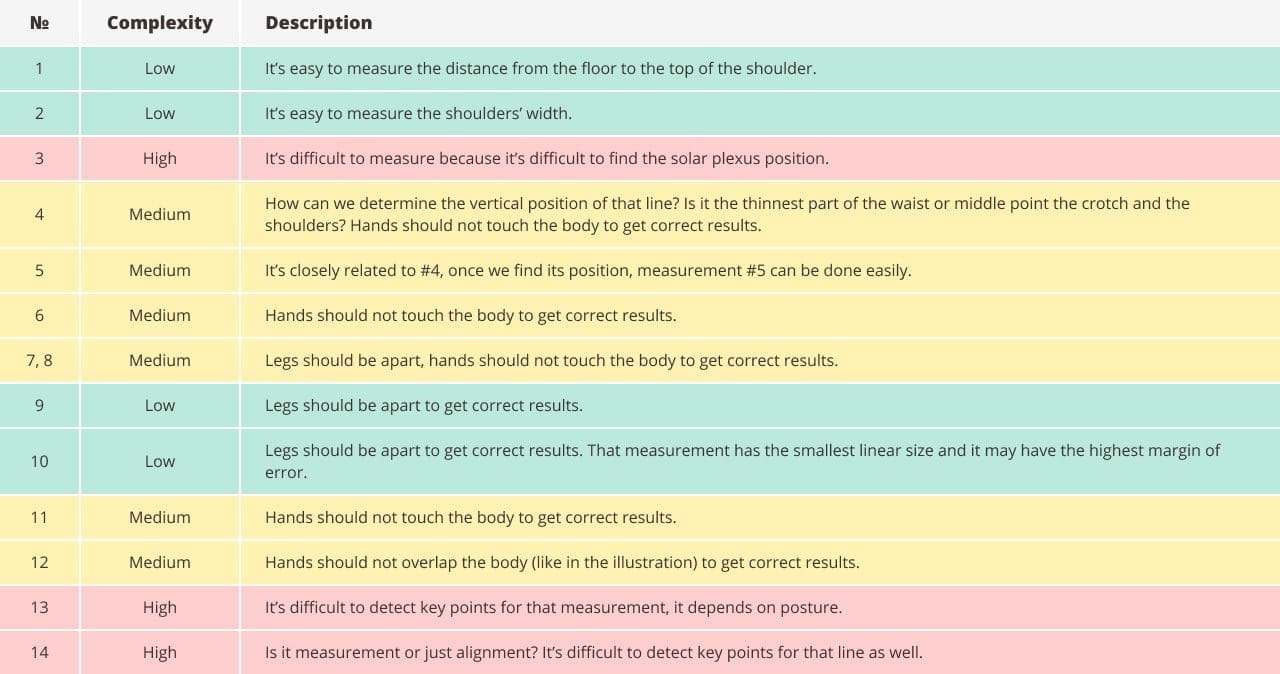

However, in our experience, the accuracy of body measurements varies widely between body parts.

The last step is to develop a pipeline that calculates the required body measurements from the results the models provide. For better results, AI measurements can be combined with manual measurements.
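As a rough illustration of the pixel-to-centimeter conversion, the sketch below assumes the user enters their height, which fixes the image scale. The key point names (`head_top`, `heel`, and so on) and all function names are hypothetical, since each pose model defines its own key point set. Note that the results are frontal widths, not circumferences.

```python
import math


def cm_per_pixel(keypoints, height_cm):
    """Estimate the image scale from the user's known height (assumed input)."""
    pixel_height = math.dist(keypoints["head_top"], keypoints["heel"])
    return height_cm / pixel_height


def shoulder_width_cm(keypoints, height_cm):
    """Distance between the shoulder key points, converted to centimeters."""
    scale = cm_per_pixel(keypoints, height_cm)
    return math.dist(keypoints["left_shoulder"], keypoints["right_shoulder"]) * scale


def mask_width_cm(mask_row, scale):
    """Width of the body in one row of a binary segmentation mask (1 = body)."""
    return sum(mask_row) * scale


# Hypothetical model outputs for a 176 cm tall user.
keypoints = {
    "head_top": (100, 50),
    "heel": (100, 850),
    "left_shoulder": (10, 200),
    "right_shoulder": (190, 200),
}
waist_row = [0] * 25 + [1] * 150 + [0] * 25  # mask row at waist level

scale = cm_per_pixel(keypoints, height_cm=176)
shoulders = shoulder_width_cm(keypoints, height_cm=176)
waist = mask_width_cm(waist_row, scale)
```

A second, side-view image would be one way to estimate circumferences from widths, which is also where manual corrections tend to help.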

The choice of technical approach usually depends on your goal, resources, and limitations. Every virtual fitting room product is unique and requires a specific outlook.

AI consulting services can reduce uncertainty when it comes to investing in virtual fitting room development. After studying your requirements, our experts can analyze your idea’s feasibility and develop a detailed plan to execute it. We then can outline the technologies needed to easily move into the development stage and bring your vision to market.

Virtual Fitting Room Development Challenges and Ways to Overcome Them

There are several challenges that brands and developers may face when developing virtual fitting room applications. Let’s explore some of those challenges and how to manage them.

Processing Power and Performance

Real-time simulations can be resource-intensive, leading to performance issues on lower-end devices. To get around this problem, optimize algorithms and 3D models to reduce complexity without sacrificing quality, and offload heavy processing tasks to cloud GPU resources so that devices handle lighter workloads.

Body Tracking Accuracy

Accurate body tracking is essential for a good fit but can be affected by lighting, background, and camera quality. To mitigate these issues, we can use advanced computer vision and machine learning algorithms for better body detection and tracking. Additionally, implementing calibration steps to help users set up their environment for optimal tracking conditions can enhance accuracy.
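A calibration step might look like the sketch below, which checks a frame before tracking starts and tells the user what to fix. It assumes a grayscale frame flattened to pixel values in the 0 to 255 range and per-key-point confidence scores from a pose model. The thresholds, key point names, and the `calibration_hints` function are illustrative assumptions that would need tuning per device.

```python
REQUIRED_KEYPOINTS = (
    "head_top", "left_shoulder", "right_shoulder", "left_ankle", "right_ankle",
)


def calibration_hints(gray_pixels, keypoint_scores,
                      min_brightness=60, max_brightness=200, min_score=0.5):
    """Return hints for the user; an empty list means conditions look fine."""
    hints = []
    brightness = sum(gray_pixels) / len(gray_pixels)  # mean of 0..255 values
    if brightness < min_brightness:
        hints.append("Move to a brighter spot.")
    elif brightness > max_brightness:
        hints.append("Reduce direct light on the camera.")
    # Key points the pose model could not find with enough confidence.
    missing = [name for name in REQUIRED_KEYPOINTS
               if keypoint_scores.get(name, 0.0) < min_score]
    if missing:
        hints.append("Step back so your whole body is visible: " + ", ".join(missing))
    return hints
```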

Dynamic Clothing Simulation

Simulating how clothing moves and drapes in real time is complex because fabric properties vary. Physics engines that accurately model cloth behavior enable realistic simulations of movement, and a comprehensive database of fabric properties ensures that different materials are simulated correctly, providing a more authentic virtual fitting experience.
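To show how fabric properties feed into a simulation, here is a deliberately tiny sketch: a mass-spring model, one common way to approximate cloth, reduced to a single particle hanging on one spring. The `FABRICS` table holds illustrative values rather than measured data, and all names are hypothetical. A real engine would simulate a full grid of particles with collision handling.

```python
from dataclasses import dataclass

GRAVITY = 9.81  # m/s^2


@dataclass(frozen=True)
class Fabric:
    stiffness: float  # spring constant, N/m
    damping: float    # damping coefficient, N*s/m
    mass: float       # mass per simulated particle, kg


# Illustrative values only; a real database would hold measured properties.
FABRICS = {
    "denim": Fabric(stiffness=400.0, damping=2.0, mass=0.02),
    "silk": Fabric(stiffness=60.0, damping=0.5, mass=0.005),
}


def simulate_hanging_particle(fabric, rest_length=0.1, dt=0.001, steps=5000):
    """Simulate one particle hanging on a spring and return its final length."""
    length, velocity = rest_length, 0.0
    for _ in range(steps):
        spring_force = -fabric.stiffness * (length - rest_length)
        force = spring_force - fabric.damping * velocity + fabric.mass * GRAVITY
        # Semi-implicit Euler: update velocity first, then position.
        velocity += (force / fabric.mass) * dt
        length += velocity * dt
    return length


denim_length = simulate_hanging_particle(FABRICS["denim"])
silk_length = simulate_hanging_particle(FABRICS["silk"])
```

With these sample values the silk strand settles at a longer length than the denim one, which is the kind of material difference a fabric database is meant to capture.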

How MobiDev Can Help You Build a Custom Virtual Fitting Room Solution

Building virtual fitting room applications isn’t easy. It requires a combination of design, technology, and UX-centered development. Not only do you have to make the virtual dressing experience accurate and visually appealing, but you also have to make it accessible and fun to use.

Whatever strategy you choose, MobiDev’s AR and AI teams have the expertise you need to bring your product to market. Our AI consultants can help you choose the most suitable technologies to develop a product that meets your needs and expectations. Get in touch today to take the next step forward.