
According to Grand View Research (2025), the U.S. self-checkout systems market was valued at USD 1.91 billion in 2024 and is projected to grow at a CAGR of 12.0% from 2025 to 2030.

Fully automated checkout based on computer vision is a successful example of retail automation. But it requires an integrated software infrastructure and poses both development and financial challenges.

In this article, we’ll explore how to build a self-checkout product, implement an AI-driven automated checkout process in retail, examine the options for cashier-less checkout automation, and address the challenges that need to be overcome.

Computer Vision for Automated Checkout

Most in-store operations, such as shelf management, checkout, and product weighing, require human supervision. Staff productivity is effectively a performance marker for the retailer, and it often becomes both a bottleneck and a source of customer frustration.

Checkout queues in particular are a pain point for both customers and retailers. And it’s not only the queues: human labor costs money too. So how does computer vision apply to these operations?

Computer vision (CV) is a branch of artificial intelligence that enables machines to extract meaningful information from images. At its core, computer vision aims to mimic human sight. Analogously to an eye, CV relies on camera sensors that capture the environment. In turn, an underlying neural network, its brain, recognizes objects, their position in the frame, or other specific properties (such as distinguishing a Pepsi can from a Dr Pepper can).

That’s our basis for understanding how computer vision fits brick-and-mortar retail tasks: it can recognize products in the frame, whether they sit on shelves or are carried by customers. This allows us to do away with barcode scanning, cash register operation, or self-checkout machines.

Although implementations of computer vision differ significantly in complexity and budget, there are two common scenarios for using it in retail automation. So first let’s look at how full store automation can be built.

Option #1: AI-powered automated self-checkout: full store automation

Autonomous checkout goes by different names: “cashier-less”, “grab-and-go”, “checkout-free”, etc. In the shopping experience of Amazon, Tesco, and even Walmart, such stores register the products as you shop and charge for them when you walk out. Sounds simple, and that’s how it works in a basic scenario.

Shopping session start. Shops like Amazon use turnstiles to initiate shopping via scanning a QR code. At this point, the system matches the Amazon profile and digital wallet with the actual person entering the store.

Person detection. Computer vision cameras recognize and track people and objects. Put simply, the cameras remember who each person is, and once that person takes a product from the shelf, the system places it into their virtual shopping cart. Some shops use hundreds of cameras to view the store from different angles and cover all of its zones.

Product recognition. Once the person grabs something from the shelf and takes it with them, cameras capture this action. By matching the product image from the video with the product record in the retailer’s database, the system places the item into the virtual shopping cart.

Checkout. Once the shopper has everything they need, they can simply walk out. When the person leaves the zone covered by cameras, the system treats this as the end of the shopping session. This triggers it to calculate the total and charge it to the customer’s digital wallet.
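The four stages above can be sketched as a small session object that reacts to events coming from the cameras. This is a minimal illustration under our own assumptions, not how any particular retailer implements it: the `ShoppingSession` class, its method names, and the prices are all hypothetical.

```python
from collections import Counter
from decimal import Decimal


class ShoppingSession:
    """Hypothetical virtual cart tied to one tracked shopper."""

    def __init__(self, shopper_id, price_list):
        self.shopper_id = shopper_id    # matched at the turnstile via QR code
        self.price_list = price_list    # product name -> unit price (Decimal)
        self.cart = Counter()
        self.closed = False

    def pick(self, product):
        """Cameras saw the shopper take a product off the shelf."""
        self.cart[product] += 1

    def put_back(self, product):
        """Cameras saw the shopper return a product to the shelf."""
        if self.cart[product] > 0:
            self.cart[product] -= 1

    def leave_store(self):
        """Shopper left the camera-covered zone: close the session and total it."""
        self.closed = True
        return sum(
            (self.price_list[name] * qty for name, qty in self.cart.items()),
            Decimal("0"),
        )


prices = {"cola": Decimal("1.50"), "chips": Decimal("2.25")}
session = ShoppingSession("shopper-001", prices)
session.pick("cola")
session.pick("chips")
session.pick("chips")
session.put_back("chips")
total = session.leave_store()
print(total)  # 3.75
```

In a real store, the `pick` and `put_back` calls would be driven by the recognition models rather than written by hand, and the total would be passed on to the payment provider.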

From the customer’s standpoint, such a system offers a shopping experience similar to that of an online store, except that you don’t need to check out. Enter, find what you want, grab it, and leave. However, to give customers full autonomy and cover all the edge cases, we need to solve a large number of technical problems. So what’s so complex about autonomous checkout?

Top 3 Challenges of AI-powered automated self-checkout

Customer behavior can be unpredictable, as we are going to automate checkout for dozens of people that check and buy thousands of products at the same time. This imposes a number of challenges for computer vision:

1. Continuous Person Tracking

As the customer enters the store, the system should be able to track them continuously along their shopping route. We need to know that it’s the same person who took this or that item in different parts of the store. In a crowded store, continuous tracking can be difficult. Where face recognition is not permitted, the model has to recognize people by their overall appearance. So what happens if somebody takes off their coat or carries a child on their shoulders?

To enable continuous tracking, we need cameras to provide 100% coverage so that people can be detected as they pass from zone to zone. With cameras placed at different angles, we also need each sensor to report its precise location, so we can use this data to track objects more accurately.
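One common way to recognize a person by appearance across camera zones is to compare appearance embeddings (numeric vectors produced by a neural network) and link a new detection to the most similar known track. The sketch below assumes such embeddings already exist; the function names, the three-dimensional toy vectors, and the `0.8` threshold are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Similarity of two vectors: 1.0 means same direction, 0.0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def match_track(new_embedding, known_tracks, threshold=0.8):
    """Return the ID of the most similar known track, or None if none is close enough."""
    best_id, best_score = None, threshold
    for track_id, embedding in known_tracks.items():
        score = cosine_similarity(new_embedding, embedding)
        if score >= best_score:
            best_id, best_score = track_id, score
    return best_id


# Toy appearance embeddings for two shoppers already being tracked.
known = {
    "track-A": [0.9, 0.1, 0.3],
    "track-B": [0.1, 0.8, 0.5],
}
seen_in_next_zone = [0.85, 0.15, 0.35]
print(match_track(seen_in_next_zone, known))   # track-A
print(match_track([0.0, 0.0, 1.0], known))     # None
```

A shopper who takes off a coat would produce a noticeably different embedding, which is exactly why appearance alone is fragile and why full camera coverage and known camera positions matter.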

2. The “Who took what?” problem

Then, we have to remember there are also products, right? And customers’ shopping process is not linear. They move items, smell them, put them back, and go to another shelf. Especially when there are multiple people at one shelf, it becomes difficult for a model to recognize who took what, and if they actually took the product to buy.

This problem can be solved by implementing human pose estimation and human activity analysis. Basically, that’s another layer of artificial intelligence coupled with computer vision. What it does is it measures the position and movement of a person, to predict what he or she grabs, and if the product was taken to be purchased.

This solves the problem of multiple customers at a shelf, and helps to determine who took a specific product even if the camera was blocked by somebody.
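As a rough illustration of how pose data can answer “who took what”, we can attribute a shelf event to the person whose wrist keypoint is closest to the product’s position. This is a deliberately simplified sketch: the coordinate system, the `attribute_pick` function, and the `0.5` metre cutoff are our assumptions, and a production system would also look at movement over time.

```python
import math


def attribute_pick(shelf_point, wrists_by_person, max_distance=0.5):
    """Attribute a shelf pick to the person whose wrist is nearest to it.

    shelf_point: (x, y) position of the removed product, in metres.
    wrists_by_person: person ID -> list of (x, y) wrist positions from pose estimation.
    Returns None if no wrist is within max_distance.
    """
    best_person, best_dist = None, max_distance
    for person_id, wrists in wrists_by_person.items():
        for wrist in wrists:
            dist = math.dist(shelf_point, wrist)
            if dist <= best_dist:
                best_person, best_dist = person_id, dist
    return best_person


wrists = {
    "shopper-1": [(1.0, 1.2), (1.4, 1.1)],
    "shopper-2": [(2.1, 1.5), (2.6, 1.0)],
}
print(attribute_pick((2.0, 1.5), wrists))  # shopper-2
```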

3. Identifying similar products

As for the products themselves, we’ll also need to deal with similar packaging. Some products differ only slightly in appearance, which makes it harder for the model to pick up the distinguishing details, especially if something obstructs the view or the object is moving fast. We can address this by training the model to spot small details and by using cameras with higher resolution and frame rate.
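One simple safeguard for look-alike products is to check how far apart the model’s two best guesses are, and to flag the item for a second look when they are too close. The sketch below assumes the model returns a confidence score per product; the function name and the `0.15` margin are hypothetical.

```python
def classify_with_margin(scores, min_margin=0.15):
    """Return (label, needs_review) from per-product confidence scores.

    Expects at least two candidate products. needs_review is True when
    the two best candidates are too close to call.
    """
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    (best_label, best_score), (_, runner_up_score) = ranked[0], ranked[1]
    needs_review = (best_score - runner_up_score) < min_margin
    return best_label, needs_review


# Two similar cans: the model is unsure, so the item is flagged.
print(classify_with_margin({"pepsi": 0.48, "dr_pepper": 0.45, "water": 0.07}))
# A clear winner: no review needed.
print(classify_with_margin({"pepsi": 0.90, "dr_pepper": 0.06, "water": 0.04}))
```

A flagged item could then be re-checked using another camera angle or a higher-resolution crop.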

While it looks beneficial to use autonomous checkout, the complexity of such a system can be onerous. For a tech-first company, this is not a problem. But for the usual retailer, the burden brought by artificial intelligence lowers the value of such automation. That’s why partial store automation with computer vision can be more suitable.

Option #2: Smart Vending Machines: Partial Store Automation

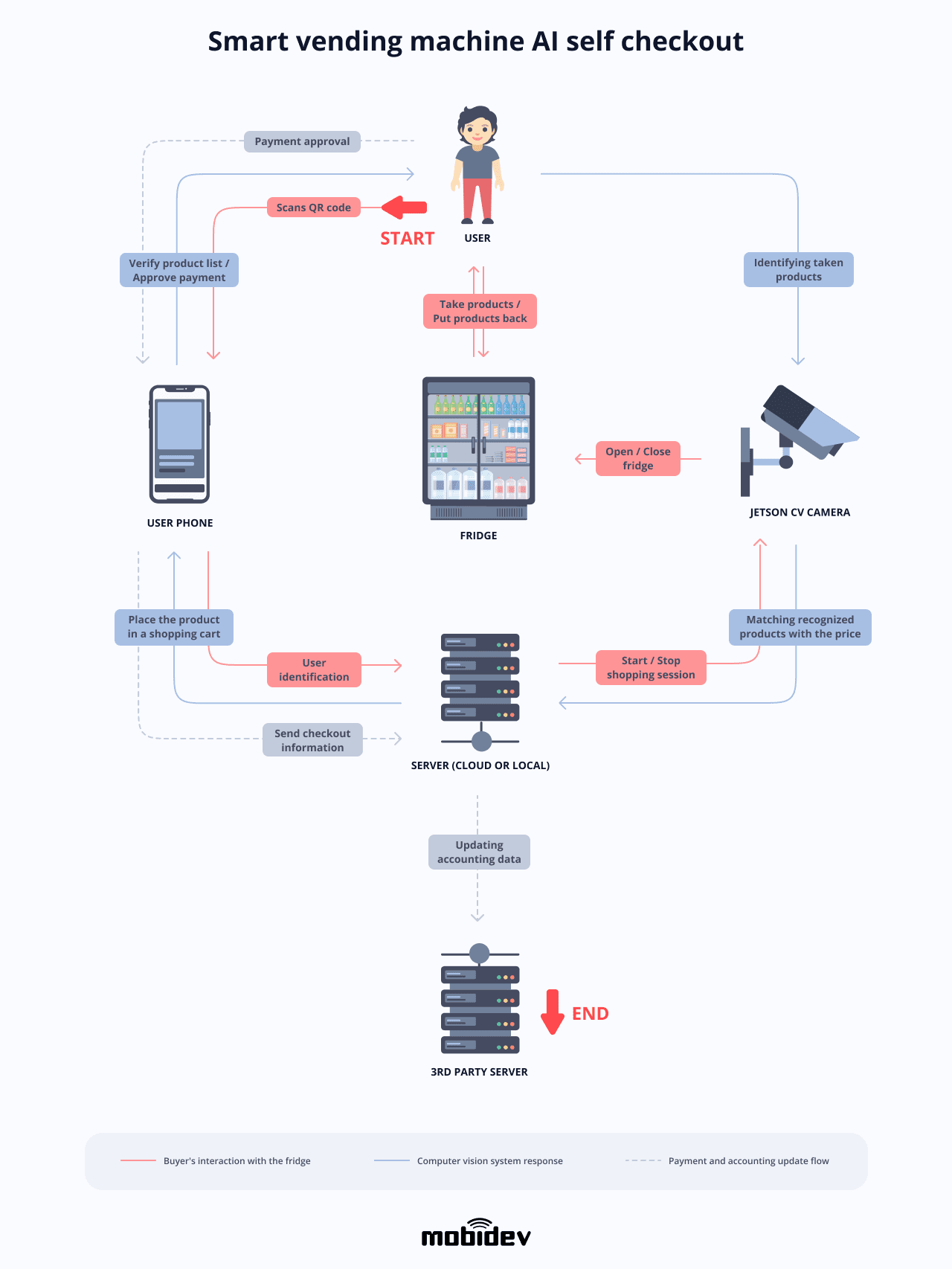

Vending machines can be placed in-store or moved out to other indoor and outdoor locations, which makes them an elegant solution to the problem of tracking the whole store. A vending machine can be a set of shelves with glass doors or a regular fridge that uses computer vision cameras to handle the purchase process. By installing a QR code scanner, we can confine the checkout procedure to a single fridge. So the idea is quite simple:

Shopping session start. The session starts once a person approaches the fridge and opens it. If the fridge door is locked, this can be done by scanning a QR code via a mobile app. In the case of an ordinary shelf, cameras can track what’s grabbed from it to initiate the session.

Creating a virtual shopping cart. When the person scans the QR code, it signals the system to create a shopping cart for this specific user.

Product recognition. The cameras might be installed inside or outside of the vending machine. The internal cameras should be able to track the taken/put back products. External cameras might track manipulations within an open fridge, just like with a regular shelf. Both types of cameras capture the products and put them into a shopping cart.

As the person might examine multiple items and move from side to side, CV cameras can also track the person in the frame. This will help us verify that it’s a single person making a purchase, and not another one standing nearby.

Verifying products. When the product is taken, the system sends this data to compare the image of the product with the one in the database and extract the price. Additionally, we can update availability automatically in our inventory management system.

Editing product list. Once the products are taken, they are added to the user’s shopping cart, which is shown on their smartphone or on a tablet mounted on the fridge. Here, the customer can modify the items and proceed to payment.

Checkout. In the case of a mobile application and QR code scanning, closing the fridge can be the trigger to complete the purchase and charge the total to a digital wallet. There might also be a POS terminal installed to allow credit card payment. At this point, the purchase is done, and the person can leave the store.
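For a closed fridge, one straightforward way to build the cart is to compare what the internal cameras saw before the door opened with what they see after it closes. The sketch below assumes the recognition model already produces per-product counts; the `items_taken` helper and the product names are hypothetical.

```python
from collections import Counter


def items_taken(before, after):
    """Products present when the door opened but missing when it closed."""
    return before - after  # Counter subtraction drops zero and negative counts


shelf_before = Counter({"cola": 4, "juice": 3, "yogurt": 2})
shelf_after = Counter({"cola": 3, "juice": 3, "yogurt": 0})

cart = items_taken(shelf_before, shelf_after)
print(dict(cart))  # {'cola': 1, 'yogurt': 2}
```

Items that were picked up and put back cancel out on their own, because only the difference between the two snapshots ends up in the cart. The same difference can be used to update product availability in the inventory system.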

While they may look like a relatively weak alternative to an autonomous checkout system, vending machines can be scaled easily to automate the whole store. This makes little difference in terms of customer experience, but requires less engineering effort and budget.

The same concept of modular automation can be applied to numerous other cases. Besides supermarkets and grocery stores, computer vision kiosks can also be installed in food service venues or coffee shops.

Cashierless food service

Restaurants, cafes, and canteens often use a buffet serving system like a sideboard with portioned dishes customers can choose from. Customers place dishes on trays, then need to check out their order, which can potentially be handled by a computer vision kiosk.

A machine learning model sitting on the backend can be trained to recognize dishes and other products placed on the tray to launch the checkout process. This idea can be implemented as a checkout kiosk where a set of cameras will scan the order. The actual payment can be completed via a usual POS terminal, or using a mobile application and a digital wallet.

The concept of cashier-less operations can be taken even further, as Starbucks has done. Using Amazon’s system, Starbucks opened a first-of-its-kind grab-and-go coffee shop. Customers can place an order via a mobile application and pick up their coffee without any checkout, similar to Amazon Go. However, computer vision projects require subject-matter knowledge, specifically data science and machine learning expertise.

So now let’s talk a bit about what you should know to approach computer vision-based checkout automation.

5 Steps to develop an AI-based automated checkout software

Based on our experience, let’s examine the steps it takes to create a computer vision system for checkout automation in retail. We’ll focus on the smart fridge case as the most approachable and versatile one.

1. Gathering requirements

First of all we need to understand our business case in detail:

Preferred automation method. Choosing smart fridges or other types of dispenser machines typically requires fewer sweeping modifications to the store while keeping the approach scalable. Full store automation will usually require changes to the venue layout and additional hardware like turnstiles, which can be a drawback for most store owners.

Store size. Vending machines can be installed in basically any number to cover all of the store’s inventory and product diversity. So the store size will determine how many vending machines you’ll need, and how the store layout will look if smart fridges hold part of the product range.

Quantity of products for recognition. As with any other machine learning project, a computer vision system requires training before it can recognize anything. A single fridge might contain 20 to 50 different products. We should take these numbers into account, as they determine how long the training phase will take.

Existing infrastructure. In most cases, physical stores don’t have enough integration between inventory management, point of sale, and accounting. However, a computer vision system will require access to the store data to automate sales updates and product availability. So examining your existing infrastructure is another point to understand when considering the requirements of this project.

So let’s say a single fridge can contain 35 items and we’ll focus on those numbers.

2. Data collection

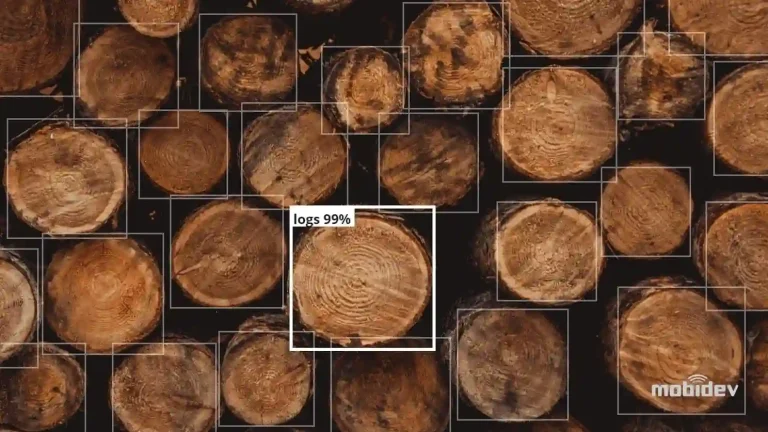

Computer vision is an artificial intelligence technology, which means it needs data before it can recognize objects. The data is used to train the model to identify different products in the frame, as well as to identify people and what they grab.

The optimal way to collect data for object recognition is to record each product on video from different angles and under different lighting conditions. It is important to have these videos categorized by product, so that the labeling (what product is in the frame) can be done automatically. As a general recommendation, the recorded data should be as close as possible to what the cameras will see with real users.
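If the recordings are stored in one folder per product, the label for every video (and every frame extracted from it) can be derived from the path alone. Here is a minimal sketch of that idea; the folder layout and file names are hypothetical examples.

```python
from pathlib import PurePosixPath


def label_from_path(video_path):
    """Use the parent folder name as the product label."""
    return PurePosixPath(video_path).parent.name


# Hypothetical layout: dataset/<product_label>/<recording>.mp4
recordings = [
    "dataset/cola_can/front_daylight.mp4",
    "dataset/cola_can/side_dim_light.mp4",
    "dataset/orange_juice/top_daylight.mp4",
]
labels = {path: label_from_path(path) for path in recordings}
print(sorted(set(labels.values())))  # ['cola_can', 'orange_juice']
```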

Once we implement a working model to automate checkout, we’ll need 60 frames per second. This is required to guarantee fast operation of the model. The higher the frame rate, the smoother the image is, and the more detail we can extract from it.
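To make the 60 fps figure concrete: if every frame is processed, the whole pipeline has roughly 16.7 ms per frame before it starts falling behind the stream. The sketch below is simple arithmetic; the function names and the example inference times are hypothetical.

```python
def frame_budget_ms(fps):
    """Time available to process one frame without falling behind the stream."""
    return 1000.0 / fps


def keeps_up(inference_ms, fps):
    """True if per-frame processing fits within the frame budget."""
    return inference_ms <= frame_budget_ms(fps)


print(round(frame_budget_ms(60), 2))         # 16.67
print(keeps_up(inference_ms=12.0, fps=60))   # True
print(keeps_up(inference_ms=25.0, fps=60))   # False
```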

3. Model training

The next step is training. Once we collect all the video recordings, a machine learning expert will prepare them for model training. This process can be split into two tasks.

- Preparing data means splitting the videos into separate frames and labeling the products we need to detect. Put simply, we might sample one frame per second to extract 60 photos from a minute-long video, then draw bounding boxes around our target objects.

- Choosing an algorithm. An algorithm is a mathematical model that learns patterns from the given data to make predictions. For tasks like object recognition, there are existing working algorithms that can be applied for building a model. So our task here is to choose a suitable one, and feed it with our data.
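The data preparation step can be sketched in two small helpers: one picks which frames to keep from a video, the other converts a pixel bounding box into normalized center/size values, a format used for labels by several common detection toolkits. Both helpers, their names, and the one-frame-per-second sampling rate are our assumptions for illustration.

```python
def sample_frame_indices(duration_s, video_fps, samples_per_s):
    """Indices of the frames to keep when sampling a video at a lower rate."""
    total_frames = int(duration_s * video_fps)
    step = int(video_fps / samples_per_s)
    return list(range(0, total_frames, step))


def normalize_box(x_min, y_min, x_max, y_max, img_w, img_h):
    """Convert a pixel bounding box to (x_center, y_center, width, height) in 0..1."""
    return (
        (x_min + x_max) / 2 / img_w,
        (y_min + y_max) / 2 / img_h,
        (x_max - x_min) / img_w,
        (y_max - y_min) / img_h,
    )


# A one-minute video recorded at 60 fps, sampled once per second.
indices = sample_frame_indices(duration_s=60, video_fps=60, samples_per_s=1)
print(len(indices), indices[:3])  # 60 [0, 60, 120]

# A box covering the central quarter of a 1920x1080 frame.
print(normalize_box(480, 270, 1440, 810, img_w=1920, img_h=1080))  # (0.5, 0.5, 0.5, 0.5)
```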

The training process may take several weeks, as it usually takes multiple iterations to reach acceptable accuracy.

4. Model retraining

If any products are added or swapped in the process, the model needs to be retrained, because it can only recognize the products it has seen during training. This means that each time a store obtains new items for sale and places them into a computer vision fridge, we’ll need to launch a new training phase for the model to learn them.

For example, we’ll need retraining to recognize Pringles cans in the image if there weren’t any Pringles before. However, this becomes easier once cameras are installed in the fridge, because we can use live recordings to make annotations and launch training again.
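A simple way to know when retraining is due is to compare the fridge’s current assortment with the list of classes the model was trained on. The sketch below uses plain sets; the function name and product identifiers are hypothetical.

```python
def new_products(model_classes, fridge_assortment):
    """Products stocked in the fridge that the current model was never trained on."""
    return fridge_assortment - model_classes


trained_on = {"cola_can", "orange_juice", "yogurt"}
stocked = {"cola_can", "orange_juice", "pringles"}

missing = new_products(trained_on, stocked)
if missing:
    print("Retraining needed for:", sorted(missing))  # ['pringles']
```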

5. Required infrastructure

The existing infrastructure in the store is usually represented by a server that processes inventory updates, and records sales volume via POS terminals. To implement a machine learning model, we’ll need to add several components:

- Cameras to record and pass the visual data.

- Video processing unit. This can be a video card or a single board computer like the Nvidia Jetson that includes a GPU optimized for computer vision needs.

- QR code. A QR sticker is placed on a turnstile or a fridge; the user scans it to identify themselves and launch the shopping process.

- Model server. As we’re talking about real-time video processing, running the model on a hardware server at the store will give more stable results. When a person grabs something from a fridge, the system’s response delay should be unnoticeable, so the hardware components must respond fast enough.

All of those components should be interconnected, as data has to flow between the units. As for the cameras, we also want to make sure the store has a stable network connection with enough bandwidth. Since the cameras deliver live streams of data in real time, delays must be kept to a minimum for the model to function properly. The customer will also expect a fast reaction from the vending machine, which depends on how quickly the model receives and processes the data.
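A quick back-of-the-envelope check can tell us whether the store network can carry the camera streams. In the sketch below, the per-camera bitrate, the 30% headroom, and the function names are hypothetical values chosen for illustration; real figures depend on resolution, frame rate, and video encoding.

```python
def required_mbps(camera_count, mbps_per_camera, headroom=1.3):
    """Aggregate stream bandwidth with a safety margin for bursts."""
    return camera_count * mbps_per_camera * headroom


def link_is_sufficient(link_mbps, camera_count, mbps_per_camera):
    """True if the network link can carry all camera streams plus headroom."""
    return link_mbps >= required_mbps(camera_count, mbps_per_camera)


# Four cameras at an assumed 8 Mbps each.
need = required_mbps(camera_count=4, mbps_per_camera=8.0)
print(round(need, 1))
print(link_is_sufficient(link_mbps=100.0, camera_count=4, mbps_per_camera=8.0))
print(link_is_sufficient(link_mbps=30.0, camera_count=4, mbps_per_camera=8.0))
```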

Privacy concerns

Privacy is another question that might concern both retailers and customers. Since computer vision is designed to detect and track objects on video, recording and storing such data may violate privacy laws in some countries.

In the US, however, it’s generally legal to use surveillance cameras in stores. As long as customers are tracked with random IDs solely for the checkout task, technologies like face recognition are not required. And even if a camera captures a person’s face, it can be blurred using AI to preserve confidentiality.
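Tracking with random IDs can be as simple as issuing a fresh random identifier the first time a camera track appears and forgetting it once checkout is complete. The `AnonymousTracker` class below is a hypothetical sketch of that idea, not a complete privacy solution.

```python
import uuid


class AnonymousTracker:
    """Maps camera tracks to random session IDs that carry no personal data."""

    def __init__(self):
        self._session_by_track = {}

    def session_for(self, track_id):
        # A fresh random ID is issued the first time a track is seen.
        if track_id not in self._session_by_track:
            self._session_by_track[track_id] = uuid.uuid4().hex
        return self._session_by_track[track_id]

    def end_session(self, track_id):
        # Forget the mapping once checkout is complete.
        self._session_by_track.pop(track_id, None)


tracker = AnonymousTracker()
first_visit = tracker.session_for(7)
same_visit = tracker.session_for(7)
tracker.end_session(7)
next_visit = tracker.session_for(7)
print(first_visit == same_visit, first_visit == next_visit)  # True False
```

Because the ID is random and discarded after the session, it cannot be used on its own to link two visits by the same person.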

Is AI self-checkout for every retailer?

As with all systems, automated checkout may seem like a pricey and bulky thing to implement. However, 79.3% of consumers use self-checkout regularly. With that in mind, vending machines might be an affordable option for the retail industry, as they bring a lot of benefits at a reasonable cost. Additionally, such systems can be customized to serve the specific needs of a given retailer thanks to the flexibility of machine learning models. Basically, any type of product can be recognized with proper training. So convenience stores are not the only ones who can benefit from computer vision applications.

Want to develop your AI-driven automated checkout product? Consider MobiDev as your consulting and engineering provider.

The MobiDev team has been developing software products tailored to the needs of the retail industry since 2009. We have a proven track record of delivering custom-built solutions that support strategic business goals.

If you need help with development of an AI self-checkout SaaS or implementation of a custom AI automated checkout system in your retail venue, you can count on our AI consulting and engineering services to achieve your goals. Contact us to discuss your idea!